Enterprise Architecture has always served as the backbone for digital transformation. However, the pace of technological change has accelerated dramatically. The shift from monolithic on-premise systems to distributed cloud-native environments, combined with the integration of Artificial Intelligence into core business processes, demands a new approach to modeling. ArchiMate, as a standard for enterprise architecture description, faces the challenge of adapting to these dynamic conditions without losing its structural integrity.

This guide explores how the ArchiMate language is positioned to handle modern complexities. We examine the structural shifts required to model cloud infrastructure, the semantics needed to represent AI capabilities, and the implications for governance in automated environments. The focus remains on the framework itself, ensuring a robust understanding of how architectural models can remain relevant in an era of continuous change.

🔄 The Shift from Static to Dynamic Modeling

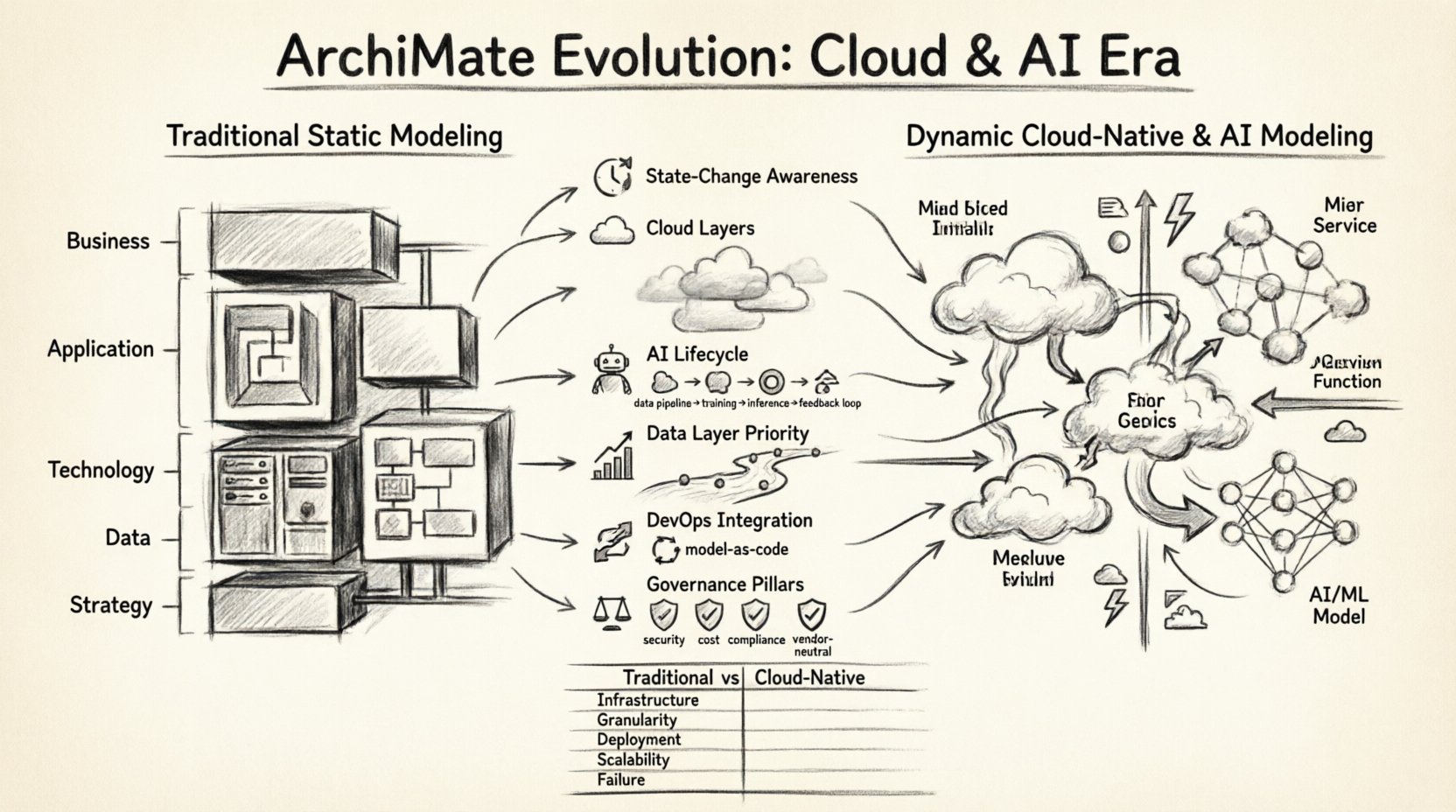

Traditional architectural modeling often relied on static snapshots. A diagram represented the state of the system at a specific point in time. In modern cloud environments, this approach is insufficient. Infrastructure is ephemeral. Services scale automatically. AI models retrain continuously. The architecture is not a fixed blueprint; it is a living system.

To address this, the ArchiMate framework is evolving to support dynamic interactions. The following points outline the necessary shifts in perspective:

- State-Change Awareness: Models must account for transient states rather than just static configurations. A cloud instance may exist only for the duration of a transaction.

- Event-Driven Relationships: Interactions are increasingly triggered by events rather than scheduled processes. ArchiMate provides event elements and the triggering relationship for this purpose, and models need to use them to make these triggers explicit.

- Abstraction Layers: The boundary between the Application Layer and the Technology Layer is blurring in serverless environments. Modeling must reflect this fluidity.

- Data Flow Visibility: In AI-driven systems, data movement is the primary value driver. Architecture must prioritize data lineage alongside service interaction.

These shifts require architects to move beyond simple block diagrams. The modeling language must support the representation of behavior, not just structure. This aligns with the core philosophy of ArchiMate, which has always emphasized the connection between business and technology, but now extends that connection into the operational runtime.
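The event-driven point above can be sketched as a small in-memory model. This is a minimal illustration, not an ArchiMate tool API: the `Element`, `Relationship`, and `Model` classes, the `kind` labels, and the element names are all assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Element:
    name: str
    kind: str  # free-text label chosen for this sketch, e.g. "application-event"


@dataclass(frozen=True)
class Relationship:
    kind: str  # e.g. "triggering", "flow", "serving"
    source: Element
    target: Element


@dataclass
class Model:
    relationships: list = field(default_factory=list)

    def relate(self, kind: str, source: Element, target: Element) -> None:
        """Record a relationship between two elements."""
        self.relationships.append(Relationship(kind, source, target))

    def triggered_by(self, event: Element) -> list:
        """Return the elements that the given element triggers directly."""
        return [r.target for r in self.relationships
                if r.kind == "triggering" and r.source == event]


# Hypothetical elements: behavior starts because an event occurs,
# not because a schedule says so.
model = Model()
order_placed = Element("Order Placed", "application-event")
fraud_check = Element("Check Order for Fraud", "application-process")
queue_full = Element("Queue Depth Exceeded", "technology-event")
scale_out = Element("Start Worker Instance", "technology-process")

model.relate("triggering", order_placed, fraud_check)
model.relate("triggering", queue_full, scale_out)

print([e.name for e in model.triggered_by(order_placed)])
```

Keeping the trigger as an explicit relationship means that a question such as "what starts when this event occurs?" can be answered by a query rather than by reading a diagram.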

☁️ Cloud-Native Architecture Modeling

Cloud computing introduces a specific set of challenges for architectural representation. Microservices, containers, and serverless functions create a level of granularity that traditional enterprise architecture diagrams struggle to capture without becoming cluttered. The evolution of ArchiMate in this context focuses on abstraction and grouping.

When modeling cloud-native systems, specific considerations apply to the Application and Technology layers:

- Microservices: Instead of treating a monolithic application as a single node, architects must represent individual services as distinct application components. Relationships between these services often involve asynchronous messaging, which is usually better expressed with flow or triggering relationships than with a plain serving relationship.

- Containers: Containers represent a deployment technology that abstracts the underlying host. ArchiMate modeling should distinguish between the application software (application components, realized by artifacts such as container images) and the container runtime environment (system software running on a node) to clarify dependencies.

- Serverless: Function-as-a-Service (FaaS) models challenge the concept of persistent application components. The model must represent functions as transient processes rather than long-running services.

- Infrastructure as Code: The definition of infrastructure is becoming code. Architectural models should ideally map to the declarative templates used to provision resources, ensuring consistency between the design and the implementation.
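The separation between services, container images, and the runtime can be sketched as a list of typed relationships. This is a simplified illustration under stated assumptions: the tuple layout, the service and image names, and both helper functions are hypothetical, and only the relationship kinds (flow, realization, assignment) are borrowed from ArchiMate.

```python
# Each relationship is (kind, source, target). All names are illustrative.
RELATIONSHIPS = [
    ("flow", "Order Service", "Payment Service"),  # asynchronous message
    ("realization", "order-service image", "Order Service"),
    ("realization", "payment-service image", "Payment Service"),
    ("assignment", "Container Runtime", "order-service image"),
    ("assignment", "Container Runtime", "payment-service image"),
]


def runtimes_for(component: str) -> list[str]:
    """Find the runtimes a component depends on, via the images that realize it."""
    images = {src for kind, src, tgt in RELATIONSHIPS
              if kind == "realization" and tgt == component}
    return sorted({src for kind, src, tgt in RELATIONSHIPS
                   if kind == "assignment" and tgt in images})


def async_consumers(component: str) -> list[str]:
    """List the components that receive messages from the given component."""
    return sorted(tgt for kind, src, tgt in RELATIONSHIPS
                  if kind == "flow" and src == component)


print(runtimes_for("Order Service"))
print(async_consumers("Order Service"))
```

Because the application component and the runtime are separate entries, replacing the container runtime changes only the assignment rows and leaves the service-to-service flows untouched.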

Comparison: Traditional vs. Cloud-Native Modeling

| Aspect | Traditional On-Premise | Cloud-Native |
|---|---|---|
| Infrastructure Ownership | Fixed hardware, dedicated servers | Ephemeral, shared resources, virtualized |
| Service Granularity | Monolithic applications | Microservices, functions |
| Deployment Model | Manual or scripted deployment | CI/CD pipelines, automated provisioning |
| Scalability | Vertical scaling (larger machines) | Horizontal scaling (more instances) |
| Failure Mode | Hardware failure leads to downtime | Designed for failure, automatic recovery |

Understanding these distinctions is critical for accurate documentation. If a model treats a cloud function as a permanent application component, it creates a false sense of stability. The notation must reflect the transient nature of the technology.

🤖 Integrating Artificial Intelligence

The integration of AI into enterprise systems introduces a new category of capabilities that standard ArchiMate diagrams did not originally anticipate. AI is not just a tool; it is a capability that influences decision-making, automation, and customer interaction. Modeling AI requires defining the lifecycle of models, the data required for training, and the inference engines used at runtime.

Modeling AI Capabilities

To represent AI effectively within the framework, architects should consider the following elements:

- Machine Learning Models: These should be represented as Application Components that expose Application Services. They possess specific behaviors, such as “Predictive Analysis” or “Image Recognition,” which in turn support Business Services.

- Training Data Pipelines: The flow of data required to train a model is a distinct architectural concern. This involves data sources, preprocessing steps, and storage repositories. This data flow must be traced through the Data Layer.

- Inference Endpoints: The runtime interface where the AI model interacts with the business process. In ArchiMate terms this is typically an application interface, implemented as a web service or API.

- Feedback Loops: AI systems often improve over time. The architecture must model the feedback mechanism where real-world outcomes are fed back into the training process.

By explicitly modeling these components, organizations can assess the dependencies and risks associated with AI implementation. For instance, if a specific data source is required for training, the model makes that dependency visible to stakeholders. This visibility is essential for compliance and risk management.
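The dependency visibility described above can be sketched as a graph traversal. This is a minimal illustration, assuming a hypothetical fraud-detection setup: the `FEEDS` mapping, the element names, and the `impacted_by` function are invented for this example. The last entry models a feedback loop, so the traversal has to tolerate cycles.

```python
from collections import deque

# Edges point from a provider to the elements that rely on it.
# All names are hypothetical.
FEEDS = {
    "Customer Transactions": ["Training Pipeline"],
    "Training Pipeline": ["Fraud Model"],
    "Fraud Model": ["Inference Endpoint"],
    "Inference Endpoint": ["Fraud Screening"],
    "Fraud Screening": ["Training Pipeline"],  # feedback loop: outcomes retrain the model
}


def impacted_by(start: str) -> set[str]:
    """Return every element that directly or indirectly relies on `start`."""
    seen: set[str] = set()
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for dependent in FEEDS.get(current, []):
            # The `seen` set stops the feedback loop from cycling forever.
            if dependent not in seen and dependent != start:
                seen.add(dependent)
                queue.append(dependent)
    return seen


print(sorted(impacted_by("Customer Transactions")))
```

Running the traversal from a data source lists everything a stakeholder would need to review if that source became unavailable or non-compliant.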

📊 The Data Layer in the Age of AI

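Data is the fuel for both cloud applications and AI systems. In traditional architecture, the Data Layer was often secondary to the Application Layer. In modern architectures, data is often the primary asset. ArchiMate does not define a separate data layer; it models information through passive structure elements (business objects, data objects, and artifacts) within the Business, Application, and Technology layers. This guide uses "Data Layer" as shorthand for those elements and the information flows between them.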

When evolving for cloud and AI contexts, the Data Layer requires specific attention:

- Data Governance: As data moves across cloud boundaries and AI systems, governance policies must be modeled. This includes access rights, encryption, and retention policies.

- Data Lakes vs. Warehouses: The distinction between raw storage for exploration and model training (data lakes) and curated storage for reporting (data warehouses) must be clear in the model. AI often relies on the lake, while business reporting relies on the warehouse.

- Real-Time vs. Batch: AI inference often requires real-time data, while training might use batch data. The architecture must support both the low-latency and the high-throughput requirements.

- Semantic Interoperability: Different AI models may use different data schemas. The architecture must define the mapping between these schemas to ensure the business process understands the output.
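The governance point above can be sketched as a completeness check over modeled data objects. This is an illustrative sketch only: the `REQUIRED_POLICIES` keys, the data object names, and the `missing_policies` function are assumptions, and a real model would carry these attributes as element properties in whatever tool is used.

```python
# Governance attributes every data object is expected to carry (assumed set).
REQUIRED_POLICIES = ("access", "encryption", "retention_days")

# Hypothetical data objects with the policies recorded in the model.
DATA_OBJECTS = {
    "Customer Profile": {
        "access": "restricted",
        "encryption": "at-rest",
        "retention_days": 365,
    },
    "Clickstream Events": {
        "access": "internal",
    },
}


def missing_policies(data_objects: dict) -> dict:
    """Map each incompletely governed data object to the policies it lacks."""
    gaps = {}
    for name, policies in data_objects.items():
        missing = [key for key in REQUIRED_POLICIES if key not in policies]
        if missing:
            gaps[name] = missing
    return gaps


print(missing_policies(DATA_OBJECTS))
```

A check like this turns "governance policies must be modeled" into something that can be verified whenever the model changes.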

Mapping ArchiMate Layers to Cloud/AI Stack

| ArchiMate Layer | Cloud/AI Equivalent | Key Modeling Focus |
|---|---|---|
| Business Layer | Business Capabilities & Services | Value delivery, customer interaction |
| Application Layer | Microservices, AI Models, APIs | Functionality, logic, orchestration |
| Technology Layer | Cloud Infrastructure, Containers | Hardware, network, runtime environment |
| Data Layer (passive structure elements) | Data Stores, Databases, Repositories | Information assets, lineage, governance |
| Strategy Layer (with Motivation elements) | AI Strategy, Cloud Roadmap | Goals, principles, drivers, courses of action |

This mapping helps ensure that the abstraction levels remain consistent. It prevents the common error of mixing infrastructure details with business capabilities in the same diagram.
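The layer-mixing error mentioned above can be detected mechanically. The following is a small sketch under stated assumptions: the `LAYER_OF_KIND` lookup covers only a handful of element kinds, and the view contents and function name are hypothetical.

```python
# Partial lookup from element kind to layer (assumed labels for this sketch).
LAYER_OF_KIND = {
    "business-service": "business",
    "business-process": "business",
    "application-component": "application",
    "application-service": "application",
    "node": "technology",
    "system-software": "technology",
}


def mixes_business_and_technology(view: list) -> bool:
    """Return True if a view shows business and technology elements together."""
    layers = {LAYER_OF_KIND[kind] for _name, kind in view}
    return {"business", "technology"} <= layers


# Hypothetical views, each a list of (element name, element kind).
capability_view = [
    ("Fraud Screening", "business-service"),
    ("Fraud Model", "application-component"),
]
cluttered_view = [
    ("Fraud Screening", "business-service"),
    ("Kubernetes Worker", "node"),
]

print(mixes_business_and_technology(capability_view))
print(mixes_business_and_technology(cluttered_view))
```

Whether such a mix is an error depends on the viewpoint; a deliberate cross-layer overview is legitimate, so a check like this is better used as a warning than as a hard rule.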

🔗 Integration with DevOps and Continuous Architecture

The speed of cloud deployment aligns with DevOps methodologies. Architecture cannot be a gatekeeper that slows down delivery. It must be integrated into the development lifecycle. This concept is often referred to as Continuous Architecture.

For ArchiMate to support this, the modeling process must shift:

- Model as Code: Architectural definitions should be stored in version control systems alongside application code. This allows for automated validation of architectural constraints.

- Automated Compliance: Policies defined in the architecture can be checked against deployed infrastructure. If a deployment violates the model, the pipeline should flag it.

- Real-Time Synchronization: The architectural model should ideally reflect the actual state of the system. In cloud environments, manual updates to diagrams are prone to drift. Automation is required to keep the model accurate.

- Collaboration: Architects, developers, and operations teams must share the same model. Silos between these groups lead to misalignment in cloud environments.

This integration ensures that the architecture remains a living document rather than a historical artifact. It supports the agile nature of modern software development while maintaining the strategic oversight required for enterprise stability.
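The "Model as Code" and drift ideas above can be sketched as a pipeline step that compares the versioned model with what is actually deployed. This is a minimal illustration: the JSON layout, the node names, and `detect_drift` are assumptions, and the deployed list is hard-coded here where a real pipeline would query the platform's inventory.

```python
import json

# A model fragment as it might be stored in version control (assumed format).
MODEL_JSON = """
{
  "technology_nodes": ["orders-db", "orders-api", "fraud-scoring"]
}
"""


def detect_drift(model_nodes: list, deployed_nodes: list) -> dict:
    """Compare modeled nodes with deployed ones and report both directions of drift."""
    modeled = set(model_nodes)
    running = set(deployed_nodes)
    return {
        "missing_from_deployment": sorted(modeled - running),
        "not_in_model": sorted(running - modeled),
    }


model = json.loads(MODEL_JSON)
deployed = ["orders-api", "orders-db", "legacy-batch"]  # hypothetical inventory

report = detect_drift(model["technology_nodes"], deployed)
print(report)
```

A pipeline could fail the build when `not_in_model` is non-empty, which makes the architecture model a checked input to delivery rather than a document maintained after the fact.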

⚖️ Governance and Compliance in Automated Environments

As systems become more automated, the risk of configuration drift increases. Governance must be proactive rather than reactive. ArchiMate's Motivation elements, such as principles, requirements, and constraints, provide a structure for defining governance rules.

Key areas for governance in the cloud and AI age include:

- Security Posture: Security controls must be modeled as part of the architecture. This includes identity management, network segmentation, and encryption standards.

- Cost Management: Cloud costs can spiral without visibility. The architecture should model cost centers and resource allocation to enable financial governance.

- Regulatory Compliance: Regulations regarding data residency and AI ethics are tightening. The model must capture where data resides and how decisions are made by automated systems.

- Vendor Lock-in: Reliance on specific cloud provider services can create lock-in. The architecture should model abstraction layers to minimize dependency on proprietary features.

By embedding these governance concerns into the model, organizations can ensure that compliance is a design requirement, not an afterthought. This approach reduces the friction between innovation and regulation.
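Treating compliance as a design requirement can be sketched with a data residency rule evaluated against the model. This is an illustrative sketch: the `DataPlacement` class, the region labels, and the data object names are hypothetical and not tied to any cloud provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataPlacement:
    data_object: str
    stored_in: str              # region where the data is actually stored
    allowed_regions: frozenset  # regions permitted by the residency constraint


def residency_violations(placements: list) -> list:
    """Return the data objects stored outside their permitted regions."""
    return [p.data_object for p in placements
            if p.stored_in not in p.allowed_regions]


# Hypothetical placements taken from the model.
placements = [
    DataPlacement("Customer Profile", "eu-west", frozenset({"eu-west", "eu-central"})),
    DataPlacement("Training Dataset", "us-east", frozenset({"eu-west"})),
]

print(residency_violations(placements))
```

Expressing the constraint next to the data object keeps the rule and the thing it governs in the same model, so a change to either one is reviewed together.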

🛠️ Future Proofing the Architecture

The technology landscape will continue to evolve. New paradigms will emerge beyond current cloud and AI trends. To maintain relevance, the architectural modeling approach must remain adaptable.

Strategies for future-proofing include:

- Focus on Principles: Principles are more stable than technologies. Modeling based on fundamental architectural principles ensures longevity.

- Modular Design: Design systems that can be updated independently. This allows the architecture to evolve without requiring a complete rewrite.

- Standardization: Adhering to open standards like ArchiMate ensures that the models remain understandable and portable across different tools and organizations.

- Continuous Learning: Architects must stay informed about emerging technologies. The framework should be updated to incorporate new concepts as they mature.

📝 Summary of Implications

The evolution of ArchiMate in the context of cloud and AI represents a maturation of the enterprise architecture discipline. It moves from a static documentation tool to a dynamic modeling language capable of describing complex, automated systems. The focus on data, the recognition of ephemeral infrastructure, and the integration of AI capabilities ensure that the framework remains a valuable asset for organizations navigating digital transformation.

Adopting these evolving modeling practices requires a shift in mindset. It demands that architects view the system as a continuous flow of value rather than a collection of static components. By leveraging the full depth of the framework, organizations can achieve clarity in their complex environments. This clarity supports better decision-making, reduces risk, and accelerates the delivery of business value.

The path forward involves collaboration between technical teams and business leaders. It requires a shared understanding of the architecture that transcends tool-specific implementations. As the digital ecosystem continues to expand, the ability to model these relationships accurately will remain a critical competency for enterprise success.