Modern software engineering relies on a delicate balance between speed and stability. In an Agile environment, where iterations are short and feedback loops are tight, the need for robust quality assurance is paramount. Test-Driven Development (TDD) offers a structured approach to writing code that aligns perfectly with these requirements. By shifting the focus from verification to prevention, teams can build systems that are resilient, maintainable, and adaptable to change.

This guide explores the mechanics of implementing TDD within an Agile framework. It moves beyond surface-level definitions to examine the practical application of writing tests before code, the cultural shifts required, and the specific strategies for integrating this discipline into sprint cycles without sacrificing velocity.

Understanding the Core Philosophy 🧠

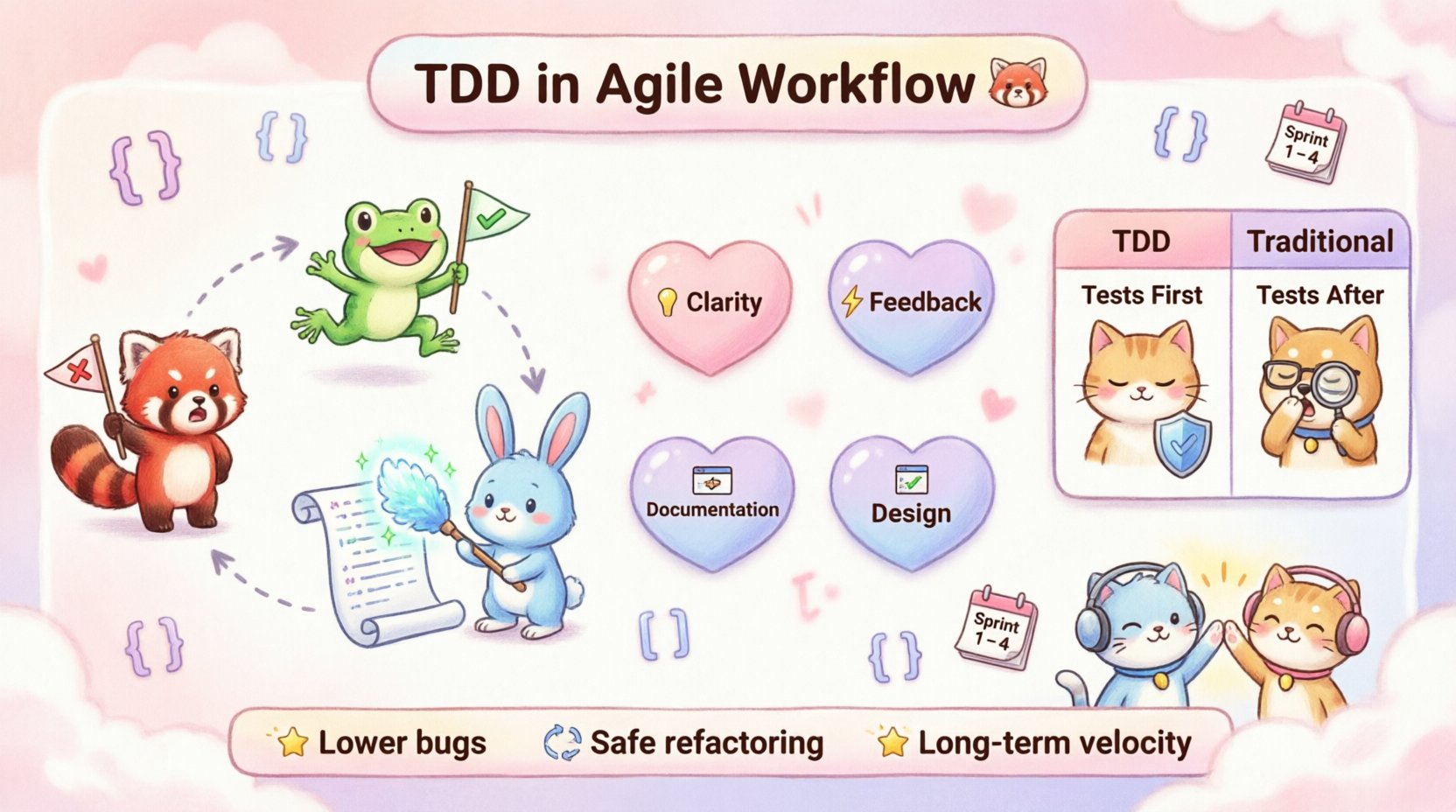

Test-Driven Development is not merely a testing strategy; it is a design methodology. When developers write tests first, they are forced to clarify requirements before writing implementation details. This process ensures that every line of code serves a specific, validated purpose.

In an Agile context, TDD acts as a safety net. It allows teams to refactor code with confidence, knowing that the existing test suite will catch regressions. This confidence is essential when working in sprints that demand frequent delivery. The primary goal is not just to find bugs, but to guide the design of the software itself.

Clarity: Writing a test forces the developer to define the expected behavior explicitly.

Feedback: Immediate feedback on code correctness reduces the time spent debugging.

Documentation: Tests serve as living documentation that stays synchronized with the codebase.

Design: The requirement to test code often leads to looser coupling and higher cohesion.

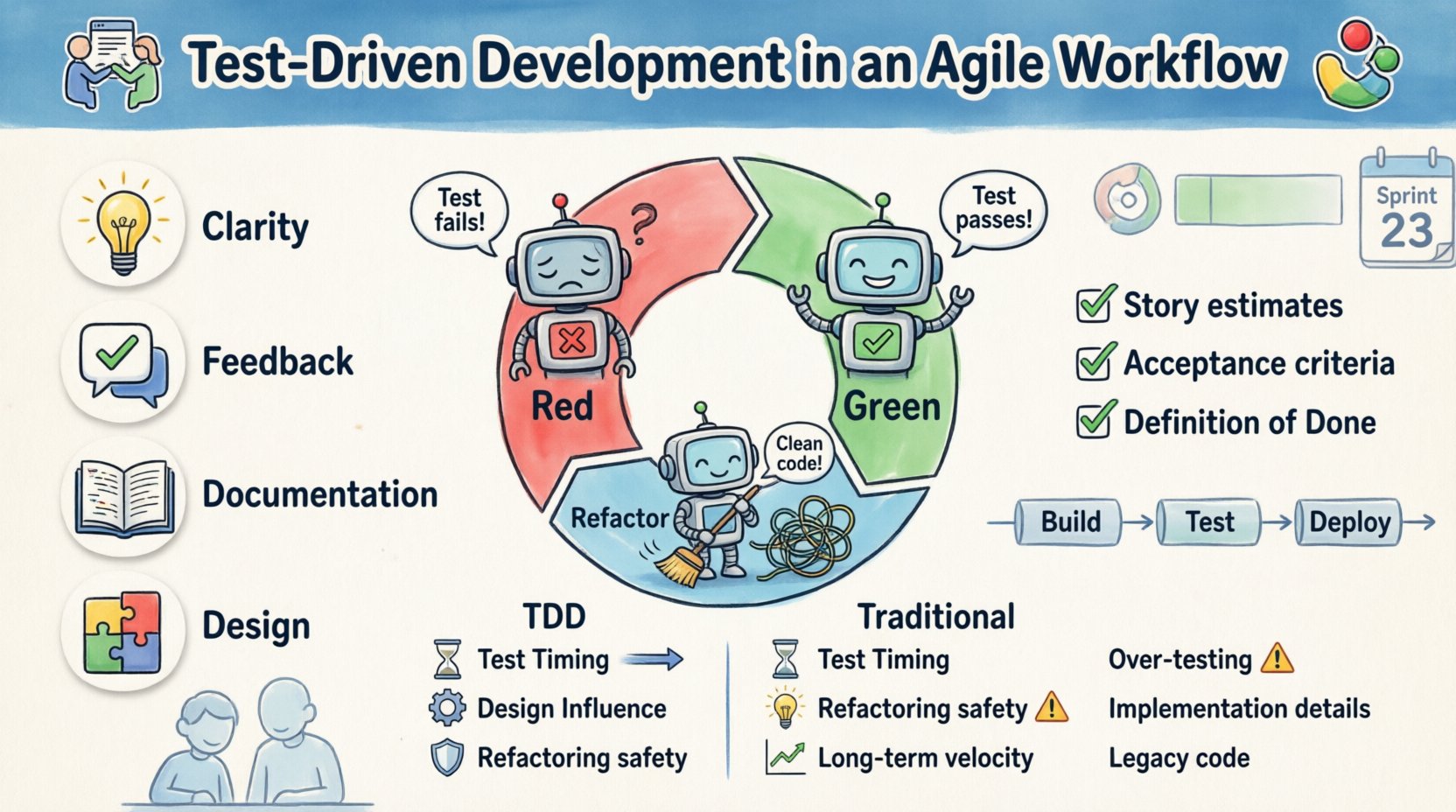

The Red-Green-Refactor Cycle 🔴🟢

The heartbeat of TDD is a repetitive loop consisting of three distinct phases. Understanding the nuance of each phase is critical for effective implementation.

1. Red: Write a Failing Test

The process begins by writing a small, specific test that describes a desired piece of functionality. At this stage, the production code does not exist, so the test must fail. Seeing it fail, and fail for the expected reason, confirms that the test is actually capable of detecting the missing behavior. It is crucial to keep the test narrow; attempting to verify too much functionality in a single test makes failures hard to diagnose.

Identify the specific behavior to be added.

Write the test assertion.

Run the test suite to confirm the failure.

2. Green: Make It Work

Once the test is failing, the goal is to write the minimum amount of code necessary to make the test pass. This phase discourages over-engineering. Developers should not add extra features, handle edge cases that are not currently tested, or refactor at this stage. The focus is solely on passing the specific test written in the Red phase.

Write the simplest code to satisfy the test.

Do not worry about code aesthetics yet.

Run the test to confirm it passes.
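A Green-phase step might look like the following sketch, again assuming Python's `unittest` and a hypothetical `cart_total` function chosen purely for illustration. The implementation is deliberately plain: it does only what the single existing test demands.

```python
import unittest


def cart_total(prices):
    # Simplest code that satisfies the one existing test.
    # No rounding, discounts, or input validation: nothing tests for them yet.
    total = 0
    for price in prices:
        total += price
    return total


class CartTotalTest(unittest.TestCase):
    def test_sums_item_prices(self):
        self.assertEqual(cart_total([5, 10]), 15)
```

Any further behavior, such as rejecting negative prices, would first get its own failing test before code is added for it.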

3. Refactor: Clean the Code

With a passing test, the developer now has the freedom to improve the code structure. Since the tests act as a safety net, any changes that break functionality will be caught immediately. This phase involves renaming variables, removing duplication, and simplifying logic. The key constraint is that the test suite must remain green throughout this process.

Apply design patterns to improve readability.

Remove any duplicated logic.

Ensure the test suite still passes.
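To illustrate the Refactor phase, here is a sketch using two hypothetical functions, `cart_total` and `cart_total_with_tax` (both names are assumptions made for this example). Imagine each originally contained its own hand-written summing loop; the refactored version below removes that duplication while the tests stay exactly as they were.

```python
import unittest


def cart_total(prices):
    # Refactored: the hand-written loop is replaced by the built-in sum().
    return sum(prices)


def cart_total_with_tax(prices, tax_rate):
    # Refactored: reuses cart_total instead of repeating the summing logic.
    return cart_total(prices) * (1 + tax_rate)


class CartTotalTest(unittest.TestCase):
    # These tests are unchanged by the refactoring; they must still pass.
    def test_sums_item_prices(self):
        self.assertEqual(cart_total([5, 10]), 15)

    def test_adds_tax_to_total(self):
        self.assertAlmostEqual(cart_total_with_tax([5, 10], 0.2), 18.0)
```

Because only the structure changed and not the behavior, no test needed editing. If a test had to be rewritten to accommodate the change, that would be a hint that it was checking implementation rather than behavior.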

Integrating TDD into Sprint Planning 📅

Integrating TDD into an Agile workflow requires adjustments to how work is estimated and planned. Traditional estimation methods often assume a linear progression from design to coding to testing. TDD merges these steps, which can alter velocity metrics initially.

Adjusting Story Estimates

When a user story is selected for a sprint, the team must account for the time spent writing tests. While TDD often reduces the time spent on debugging later, the initial coding phase takes longer. Teams should view test writing as an integral part of the implementation, not a separate task. If a story is too large to be broken down into small, testable units, it should be split further.

Defining Acceptance Criteria

Acceptance criteria in Agile serve as the contract between stakeholders and the development team. In a TDD environment, these criteria become the source of the test cases. This alignment ensures that what is delivered matches what was requested. Every acceptance criterion should ideally map to at least one automated test.

Criteria must be testable and unambiguous.

Tests should cover positive and negative scenarios.

Non-functional requirements (like performance) should also be tested where feasible.
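The mapping from criteria to tests can be sketched as follows. The two acceptance criteria, the `SAVE10` code, and the `apply_discount` function are all invented for illustration: AC1 says a valid code reduces the total by 10%, and AC2 says an unknown code is rejected. Each criterion gets one test, covering a positive and a negative scenario.

```python
import unittest


class UnknownDiscountCode(ValueError):
    """Raised when a discount code is not recognized (hypothetical)."""


DISCOUNTS = {"SAVE10": 0.10}  # illustrative data only


def apply_discount(total, code):
    if code not in DISCOUNTS:
        raise UnknownDiscountCode(code)
    return total * (1 - DISCOUNTS[code])


class DiscountAcceptanceTest(unittest.TestCase):
    def test_ac1_valid_code_reduces_total_by_ten_percent(self):
        # Positive scenario for AC1.
        self.assertAlmostEqual(apply_discount(200, "SAVE10"), 180.0)

    def test_ac2_unknown_code_is_rejected(self):
        # Negative scenario for AC2.
        with self.assertRaises(UnknownDiscountCode):
            apply_discount(200, "BOGUS")
```

Naming each test after its criterion makes it easy to see, during sprint review, which criteria are covered and which are not.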

Collaboration and Pair Programming 👥

TDD is often most effective when practiced collaboratively. Pair programming, where two developers work at one workstation, complements TDD naturally. One developer drives by writing the code, while the other navigates by reviewing the tests and the design.

This dynamic creates a continuous review process. The navigator can suggest edge cases to test before they are implemented. They can also spot design smells early, ensuring the code remains clean. This collaboration reduces the knowledge silos common in large teams and ensures that test coverage is comprehensive.

Defining “Done” with Quality in Mind ✅

In Agile, a user story is not complete until it meets the Definition of Done (DoD). When TDD is the standard, the DoD must explicitly include passing unit tests. This shifts the burden of quality from a final gate to an ongoing process.

If a story does not have tests, it cannot be marked as done. This prevents technical debt from accumulating. It ensures that every piece of code integrated into the main branch is verified. This rigor protects the team from regression issues that often plague releases.

Unit tests must pass for all new functionality.

Integration tests must verify component interaction.

No new code is merged without test coverage.

Managing Technical Debt 🛠️

A common misconception about TDD is that it slows development overall. It does add effort up front, but it is also a primary tool for managing technical debt. By refactoring continuously, teams prevent the codebase from becoming brittle, and when the code is easy to change, the cost of carrying technical debt remains low.

However, refactoring requires discipline. It is easy to fall back into writing spaghetti code when under pressure. The test suite provides the safety that makes refactoring practical: if a developer sees a need to simplify a module, they can do so knowing the tests will confirm that its behavior is unchanged.

Common Pitfalls and How to Avoid Them ⚠️

Despite its benefits, TDD is not a silver bullet. Teams often encounter specific challenges that can undermine the process if not addressed.

1. Over-Testing

Writing too many tests can slow down the development process. Tests should focus on behavior, not implementation details. If a test is tightly coupled to the internal structure of a class, it will break whenever that structure changes, even if the behavior remains the same.

Focus on public interfaces and observable outcomes.

Avoid testing private methods directly.

Keep tests fast and independent.

2. Testing Implementation Details

Developers may write tests that verify specific variable names or internal logic. This creates fragility. When the code is refactored, these tests fail, forcing the developer to update the test rather than the code. Tests should describe what the system does, not how it does it.
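The difference between testing behavior and testing implementation can be shown with a small sketch. The `Stack` class is hypothetical; assume its internal storage was recently changed from a list to a `collections.deque`. The behavior test uses only `push` and `pop`, while `brittle_check` peeks at private state.

```python
from collections import deque
import unittest


class Stack:
    """Hypothetical class; internal storage was changed from a list to a deque."""

    def __init__(self):
        self._items = deque()

    def push(self, item):
        self._items.append(item)

    def pop(self):
        return self._items.pop()


class StackBehaviorTest(unittest.TestCase):
    def test_pop_returns_most_recently_pushed_item(self):
        # Uses only the public interface, so it survives the list -> deque change.
        stack = Stack()
        stack.push(1)
        stack.push(2)
        self.assertEqual(stack.pop(), 2)
        self.assertEqual(stack.pop(), 1)


def brittle_check(stack):
    # Anti-pattern: inspects private state and assumes it is a list.
    return stack._items == [1, 2]
```

After the storage change, `brittle_check` returns `False` for a stack holding 1 and 2, because a deque never compares equal to a list, even though the stack still behaves correctly. The behavior test keeps passing.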

3. Ignoring Legacy Code

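Applying TDD to existing systems can be difficult because there is no test suite to start with. In these cases, teams should focus on writing tests around new features first. Over time, as code is touched, tests can be added to cover legacy sections. This incremental approach is sometimes confused with the “Strangler Fig” pattern, which is a related but different idea: gradually replacing a legacy system with new components rather than adding tests to the old one.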
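One way to put a safety net around untested legacy code before changing it is a characterization test, which records what the code currently does rather than what a specification says it should do. The sketch below assumes a hypothetical `legacy_shipping_cost` function standing in for real legacy code.

```python
import unittest


def legacy_shipping_cost(weight_kg):
    # Stand-in for untested legacy code whose rules nobody fully remembers.
    if weight_kg <= 0:
        return 0
    if weight_kg < 5:
        return 4.99
    return 4.99 + (weight_kg - 5) * 0.5


class ShippingCostCharacterizationTest(unittest.TestCase):
    def test_pins_current_behavior(self):
        # Expected values were obtained by running the existing code,
        # not from a specification. They document behavior as it is today.
        observed = {0: 0, 1: 4.99, 5: 4.99, 7: 5.99}
        for weight, cost in observed.items():
            with self.subTest(weight=weight):
                self.assertAlmostEqual(legacy_shipping_cost(weight), cost)
```

Once behavior is pinned this way, new features in the same area can be developed test-first, and any accidental change to the old behavior shows up as a failing test.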

Measuring Success and Metrics 📊

How do you know if TDD is working? Relying solely on code coverage percentages is insufficient. High coverage does not guarantee high quality. Instead, focus on metrics that reflect stability and velocity.

Defect Leakage: The number of bugs found in production should decrease over time.

Refactoring Frequency: Teams should feel comfortable refactoring code regularly.

Build Stability: The main branch should rarely be broken.

Feedback Loop Time: The time from writing code to knowing if it works should be minimal.
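The article does not prescribe a formula for these metrics. As one possible way to track defect leakage, a team could compute the share of a release's defects that were found in production. The function name and the release figures below are made up purely for illustration.

```python
def defect_leakage(found_in_production, found_before_release):
    """Fraction of all known defects that escaped to production."""
    total = found_in_production + found_before_release
    if total == 0:
        return 0.0  # no defects recorded, so nothing leaked
    return found_in_production / total


# (release, defects found in production, defects found before release)
# Invented numbers, not measurements from any real project.
releases = [("1.0", 8, 32), ("1.1", 5, 35), ("1.2", 2, 38)]

leakage_by_release = {
    name: defect_leakage(prod, pre) for name, prod, pre in releases
}

for name, rate in leakage_by_release.items():
    print(f"release {name}: {rate:.1%} of defects leaked")
```

A downward trend across releases is the signal to look for; a single release's number says little on its own.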

TDD vs Traditional Development 🆚

Understanding the differences between TDD and traditional development helps clarify the value proposition. The table below outlines the key distinctions.

| Aspect | Test-Driven Development | Traditional Development |
|---|---|---|
| Test Timing | Before implementation | After implementation |
| Design Influence | Tests guide design | Design guides tests |
| Refactoring | Safe and frequent | Risky and infrequent |
| Documentation | Living code (tests) | Separate documents |
| Debugging Time | Reduced | Higher |
| Initial Velocity | Slower | Faster |
| Long-term Velocity | Higher | Lower (due to debt) |

Continuous Integration and TDD 🔗

Automated testing is the foundation of Continuous Integration (CI). When TDD is combined with CI, the feedback loop becomes short and automatic. Every time a developer pushes code, the CI server runs the full test suite. If any test fails, the build is marked as broken.

This automation keeps bugs from accumulating unnoticed and helps the codebase stay in a deployable state. Test suites written after the fact can grow too slow or too brittle to run frequently. Tests written under TDD tend to be small, fast, and independent, which makes them well suited to CI pipelines.

Run tests on every commit.

Block merges if tests fail.

Provide immediate feedback to developers.

Automate deployment to staging environments.

Scaling TDD Across Teams 🏢

As teams grow, maintaining consistency in TDD practices becomes a challenge. Standardization is key. Teams should agree on naming conventions, test structures, and directory layouts. This consistency reduces the cognitive load when switching between tasks or team members.
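As an example of what a shared convention might look like, the sketch below uses a naming pattern of `test_<unit>_<scenario>_<expected result>` and the widely used Arrange-Act-Assert layout. Both the pattern and the `normalize_email` function are assumptions chosen for illustration; the point is that a team picks one structure and applies it everywhere.

```python
import unittest


def normalize_email(raw):
    """Hypothetical function under test."""
    return raw.strip().lower()


class NormalizeEmailTest(unittest.TestCase):
    def test_normalize_email_with_mixed_case_returns_lowercase(self):
        # Arrange
        raw = "  Alice@Example.COM "
        # Act
        result = normalize_email(raw)
        # Assert
        self.assertEqual(result, "alice@example.com")
```

When every test in the codebase follows the same shape, a developer moving between teams can read an unfamiliar test without first learning a local style.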

Knowledge sharing is also vital. Senior developers should mentor juniors on the nuances of writing effective tests. Workshops and internal tech talks can help spread best practices. Over time, TDD becomes a cultural norm rather than a mandated process.

The Human Element of TDD 👥

Finally, it is important to recognize the psychological impact of TDD. Writing tests first can feel counterintuitive. Developers are trained to solve problems, not write specifications. It takes time to shift this mindset. Teams should allow for a learning curve without penalizing initial velocity.

Patience is required. The benefits of TDD are often realized after the initial phase of building the test suite. Once the suite is established, the cost of change decreases significantly. This long-term view is essential for Agile teams that plan to maintain software over years.

Encourage a culture where failing tests are seen as helpful signals, not failures of the developer. When a test fails, it means the system is protecting itself. This perspective shift reduces anxiety and promotes a healthier development environment.

Final Thoughts on Sustainable Quality 🏁

Adopting Test-Driven Development in an Agile workflow is a commitment to sustainable engineering. It requires discipline, patience, and a willingness to change established habits. However, the return on investment is a codebase that is easier to understand, easier to change, and easier to trust.

By prioritizing quality from the start, teams can focus on delivering value rather than fixing errors. The cycle of Red-Green-Refactor becomes a rhythm that drives the project forward. With the right tools and a supportive culture, TDD transforms software development from a chaotic endeavor into a predictable, reliable process.

Start small. Pick a single feature and apply the TDD cycle. Observe the impact on design and confidence. Gradually expand the practice across the team. The goal is not perfection, but continuous improvement. In Agile work, staying adaptable while maintaining high standards is what sustains long-term success.