In the fast-paced environment of Agile development, ambiguity is the enemy of progress. When a team receives a user story without clear boundaries, expectations diverge, leading to rework, delayed releases, and frustration. Acceptance criteria and the Definition of Done are not just administrative tasks; they are the foundational contracts between stakeholders and the development team. They define what success looks like before a single line of code is written.

This guide explores the mechanics of crafting precise acceptance criteria and establishing a robust Definition of Done. We will examine how these elements drive quality, reduce waste, and ensure that every sprint delivers tangible value. By the end of this document, you will understand how to structure your backlog to minimize ambiguity and maximize delivery confidence.

🧩 Understanding Acceptance Criteria vs. Definition of Done

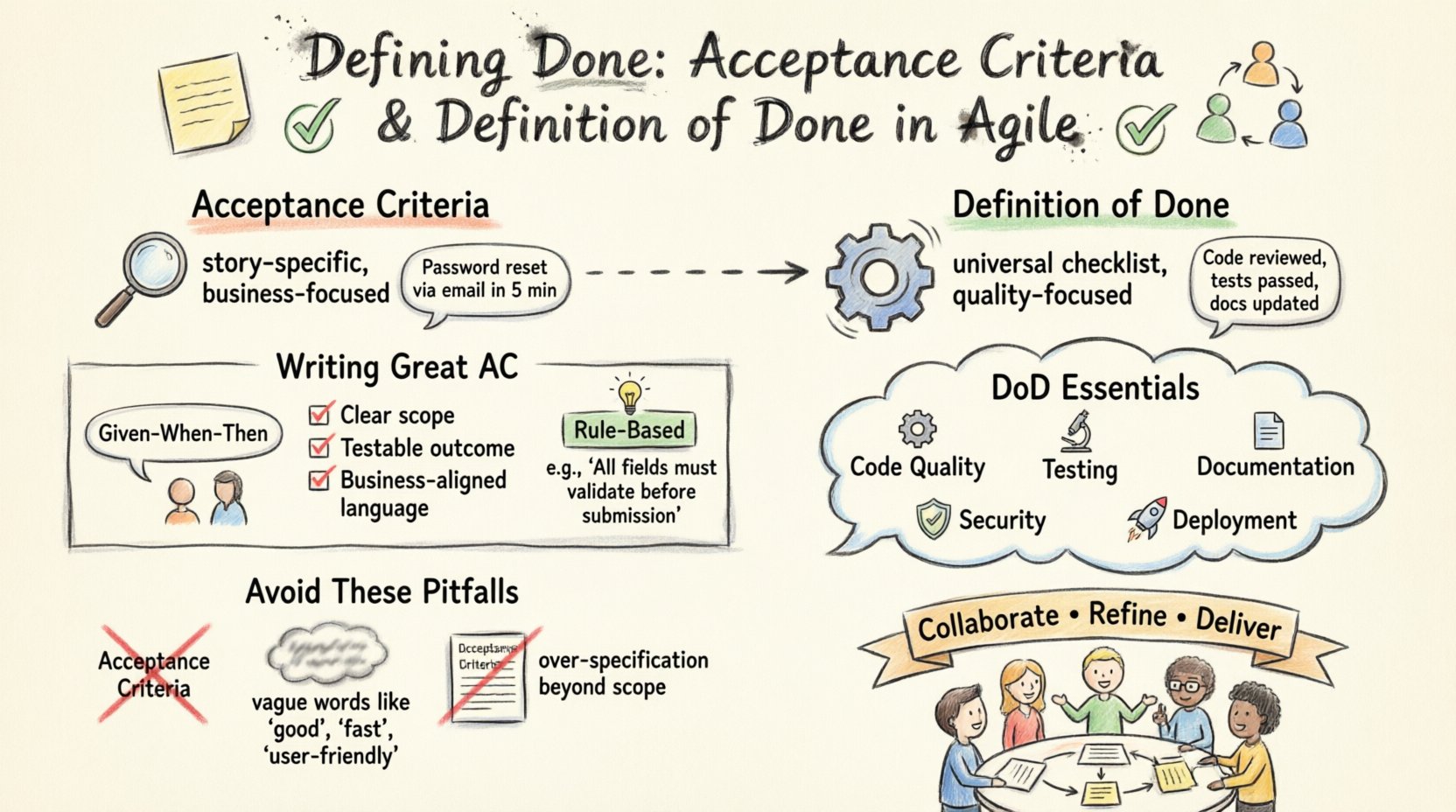

While often used interchangeably by those new to the methodology, Acceptance Criteria (AC) and Definition of Done (DoD) serve distinct purposes. Confusing the two can lead to stories that are technically complete but do not meet business needs, or stories that are business-ready but fail technical standards.

What are Acceptance Criteria?

Acceptance Criteria are a specific set of conditions that a user story must satisfy to be considered complete from a business perspective. They are unique to each story. If a story is about “logging in,” the ACs define what constitutes a successful login attempt. If a story is about “viewing a dashboard,” the ACs define what data is displayed and how it updates.

Scope: Specific to the individual user story.

Purpose: To verify functional behavior and business value.

Ownership: Typically defined by the Product Owner in collaboration with the team.

Example: “A user who requests a password reset receives the reset email within 5 minutes of the request.”

What is the Definition of Done?

The Definition of Done is a shared understanding of what it means for work to be complete across the entire project. It is a checklist that applies to every story, regardless of its content. It represents the quality baseline of the product.

Scope: Applicable to all work items in the backlog.

Purpose: To ensure consistent quality and technical integrity.

Ownership: Owned collectively by the Development Team.

Example: “Code has been reviewed, unit tests passed, and documentation updated.”

| Aspect | Acceptance Criteria | Definition of Done |
|---|---|---|
| Granularity | Specific to one story | Universal for all stories |
| Focus | Business Functionality | Technical Quality & Standards |
| Evolution | Changes per story | Stable; evolves slowly |
| Example | “Button turns green on click” | “No console errors present” |

📝 The Anatomy of a High-Quality Acceptance Criterion

Writing effective acceptance criteria requires a shift from vague desires to measurable conditions. A criterion is not a task; it is a testable condition. When criteria are weak, the testing phase becomes a guessing game. When they are strong, the testing phase becomes a verification process.

Characteristics of Effective Criteria

To ensure clarity, acceptance criteria should adhere to specific principles. These principles help the team avoid misinterpretation and ensure that everyone shares the same mental model of the feature.

Unambiguous: Avoid words like “fast,” “easy,” or “user-friendly.” Use specific metrics instead, such as “loads in under 2 seconds” or “requires 3 clicks to complete.”

Testable: If you cannot write a test case for it, it is not a valid criterion. Every criterion must result in a Pass or Fail outcome.

Complete: Cover happy paths, edge cases, and negative scenarios. What happens if the input is empty? What happens if the network fails?

Independent: While stories can depend on other stories, the criteria for one story should not rely on the criteria of another to be valid.

Valuable: Focus on what the user experiences. Technical implementation details are usually better suited for the Definition of Done or technical notes.
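The "Unambiguous" principle above can be partly automated. The following is a minimal sketch of a check that flags subjective wording in a criterion. The word list and the function name `find_vague_terms` are assumptions made for illustration; a team would maintain its own list.

```python
import re

# Illustrative word list: these three terms come from the "Unambiguous"
# principle above. Extend it with whatever your team considers too subjective.
VAGUE_TERMS = ("fast", "easy", "user-friendly")


def find_vague_terms(criterion: str) -> list:
    """Return the vague terms that appear as whole words in a criterion."""
    found = []
    for term in VAGUE_TERMS:
        # \b keeps "fast" from matching inside words such as "breakfast".
        if re.search(rf"\b{re.escape(term)}\b", criterion, flags=re.IGNORECASE):
            found.append(term)
    return found


print(find_vague_terms("The page should be fast and easy to use"))
print(find_vague_terms("The page loads in under 2 seconds"))
```

A check like this only catches known words. It does not replace the conversation about what metric should take the vague word's place.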

Writing Techniques

There are structured approaches to writing criteria that improve consistency across the team. Using these formats reduces cognitive load when reviewing backlog items.

1. The Given-When-Then Format

This format, formalized as the Gherkin syntax used by tools such as Cucumber, structures each criterion as a scenario. It separates the context, the action, and the expected outcome.

Given: The initial state or context.

When: The event or action taken by the user.

Then: The observable result that confirms the feature works.

Example:

Given the user is logged in with an active subscription

When they navigate to the billing page

Then the current plan and next renewal date are displayed
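A Given-When-Then scenario maps almost line for line onto an automated test. The sketch below shows one possible translation of the billing example. The `User` model and the `render_billing_page` function are hypothetical stand-ins invented for this illustration, not part of any real system.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class User:
    # Hypothetical model, invented for this illustration.
    logged_in: bool
    subscription_active: bool
    plan: str
    next_renewal: date


def render_billing_page(user: User) -> dict:
    """Hypothetical stand-in for the real billing page."""
    if not (user.logged_in and user.subscription_active):
        return {}
    return {"plan": user.plan, "next_renewal": user.next_renewal.isoformat()}


def test_billing_page_shows_plan_and_renewal_date():
    # Given the user is logged in with an active subscription
    user = User(logged_in=True, subscription_active=True,
                plan="Pro", next_renewal=date(2030, 1, 15))
    # When they navigate to the billing page
    page = render_billing_page(user)
    # Then the current plan and next renewal date are displayed
    assert page == {"plan": "Pro", "next_renewal": "2030-01-15"}


test_billing_page_shows_plan_and_renewal_date()
```

Keeping the three clauses as comments inside the test makes it easy to trace a failing test back to the criterion it verifies.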

2. The Checklist Format

For simpler stories, a direct list of conditions is often sufficient. This format is best for UI tweaks or straightforward data updates.

Verify the “Submit” button is disabled when the form is empty.

Ensure the error message appears in red text below the input field.

Confirm the API response returns a 200 status code.

3. The Rule-Based Format

Some features rely heavily on business logic. Listing these rules explicitly prevents logic errors during development.

Discounts apply only to items with a price greater than $10.

Users under 18 cannot access the premium tier.

Maximum file upload size is 10MB.
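Rules like these translate directly into small predicates, and writing them down tends to expose boundary questions the prose leaves open. The sketch below is one possible reading. The function names are hypothetical, and treating "10MB" as 10 × 1024 × 1024 bytes is an assumption the team would need to confirm.

```python
from decimal import Decimal

MIN_DISCOUNT_PRICE = Decimal("10")    # "price greater than $10"
MIN_PREMIUM_AGE = 18                  # "users under 18 cannot access"
MAX_UPLOAD_BYTES = 10 * 1024 * 1024   # "10MB", assumed here to mean 10 MiB


def discount_applies(price: Decimal) -> bool:
    # Strictly greater than: an item priced at exactly $10 gets no discount.
    return price > MIN_DISCOUNT_PRICE


def can_access_premium(age: int) -> bool:
    # "Under 18" is excluded, so 18 itself is allowed.
    return age >= MIN_PREMIUM_AGE


def upload_allowed(size_bytes: int) -> bool:
    # A maximum is inclusive: a file of exactly the limit is accepted.
    return size_bytes <= MAX_UPLOAD_BYTES


print(discount_applies(Decimal("10.00")), discount_applies(Decimal("10.01")))
print(can_access_premium(17), can_access_premium(18))
```

Each comparison operator encodes a boundary decision. Those boundaries (exactly $10, exactly 18, exactly 10MB) are good candidates for explicit acceptance criteria.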

🤝 Collaborative Refinement

Acceptance criteria are not written in isolation. They are the product of collaboration. The Product Owner brings the business context, while the Development Team brings the technical feasibility perspective. This collaboration happens during Backlog Refinement sessions.

Who Should Be Involved?

While the Product Owner is the primary author of the criteria, the criteria become significantly more valuable when others contribute.

Product Owner: Defines the “What” and the “Why.” Ensures the criteria reflect user needs.

Developers: Identify technical constraints. They clarify what is possible within the current architecture.

QA / Testers: Focus on edge cases. They ask, “What breaks this?” and “How do we measure success?”

Designers: Ensure visual and interaction criteria match the design specifications.

When to Refine?

Refinement is an ongoing activity, not a one-time event. The goal is to ensure that stories are ready for the next Sprint Planning. A common rule of thumb is to have 50% to 75% of the next Sprint’s backlog refined and ready to go.

Early Stage: Broad strokes. Focus on the main value proposition and high-level flows.

Mid Stage: Detailing edge cases and specific data requirements.

Pre-Sprint: Final review. Ensuring no ambiguity remains before commitment.

⚠️ Common Pitfalls and How to Avoid Them

Even experienced teams struggle with acceptance criteria. Recognizing common mistakes allows you to course-correct before they impact delivery.

1. Writing Tasks Instead of Criteria

A common error is listing implementation steps. “Create a database table” is a task. “Data persists across sessions” is a criterion. Tasks belong in the development plan, not the acceptance criteria.

2. Over-Specification

Providing too much detail can stifle innovation. If you tell developers exactly how to solve a problem, you limit their ability to find better solutions. Focus on the behavior, not the mechanism.

3. Neglecting Non-Functional Requirements

Performance, security, and accessibility are often overlooked. A feature that works but is insecure or inaccessible is not done. Include criteria for:

Performance: “Page loads in under 2 seconds.”

Accessibility: “Screen readers can navigate the form.”

Security: “Passwords are hashed before storage.”
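Non-functional criteria are testable too. As a sketch, the security criterion above could be verified along these lines, using PBKDF2 from Python's standard library. The helper names and the iteration count are assumptions chosen for illustration, not a security recommendation.

```python
import hashlib
import hmac
import os
from typing import Optional, Tuple

ITERATIONS = 100_000  # illustrative value only; follow your security guidance


def hash_password(password: str, salt: Optional[bytes] = None) -> Tuple[bytes, bytes]:
    """Return (salt, digest); a fresh random salt is generated if none is given."""
    if salt is None:
        salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, digest: bytes) -> bool:
    _, candidate = hash_password(password, salt)
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(candidate, digest)


def test_passwords_are_hashed_before_storage():
    salt, stored = hash_password("correct horse")
    assert stored != "correct horse".encode("utf-8")  # never the plaintext
    assert verify_password("correct horse", salt, stored)
    assert not verify_password("wrong guess", salt, stored)


test_passwords_are_hashed_before_storage()
```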

4. Vague Language

Words like “optimized,” “robust,” or “modern” are subjective. Replace them with measurable standards. “Optimized” becomes “Reduces API calls by 20%.” “Robust” becomes “Handles 1,000 concurrent users without error.”

🔄 The Definition of Done: Ensuring Consistency

While Acceptance Criteria ensure the feature works for the user, the Definition of Done ensures the code is safe to release. A DoD acts as a gatekeeper. If a story does not meet the DoD, it cannot be moved to “Done,” regardless of whether the Acceptance Criteria are met.

Components of a Strong Definition of Done

A comprehensive DoD covers the full lifecycle of a code change. It should be visible to everyone, often displayed on a physical board or a digital dashboard.

Code Quality: No code smells, linting checks passed, complexity thresholds met.

Testing: Unit tests written and passing, integration tests passed, manual testing verified.

Documentation: User documentation updated, API docs refreshed, internal knowledge base linked.

Security: Dependency scan passed, no hardcoded secrets, vulnerability scan cleared.

Deployment: Code merged to main branch, deployed to staging, verified in production environment.
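The gatekeeper role of the DoD can be modeled as a simple check. The sketch below is a hypothetical data model in which the item names mirror the categories above; a real checklist would be more granular and team-specific.

```python
from dataclasses import dataclass, field

# Item names mirror the categories listed above.
DOD_ITEMS = ("code_quality", "testing", "documentation", "security", "deployment")


@dataclass
class Story:
    title: str
    acceptance_criteria_met: bool = False
    dod_checked: set = field(default_factory=set)


def missing_dod_items(story: Story) -> list:
    """Return the DoD items that have not been checked off, in checklist order."""
    return [item for item in DOD_ITEMS if item not in story.dod_checked]


def can_move_to_done(story: Story) -> bool:
    # Both gates must pass: meeting the acceptance criteria alone is not enough.
    return story.acceptance_criteria_met and not missing_dod_items(story)


story = Story("Password reset", acceptance_criteria_met=True,
              dod_checked={"code_quality", "testing", "security"})
print(can_move_to_done(story))
print(missing_dod_items(story))
```

In this example the story satisfies its acceptance criteria but still cannot be closed, because the documentation and deployment items remain open.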

Refining the Definition of Done

The DoD is not static. As the team matures and technology changes, the DoD should evolve. If a new testing tool is adopted, the DoD should reflect the requirement to use it. If a security standard is updated, the DoD must align.

Regular Review: Discuss the DoD during Retrospectives. Is it too heavy? Is it too light?

Incremental Growth: Add items gradually. Do not double the DoD overnight. This prevents bottlenecks.

Team Consensus: The team must agree on the DoD. If developers feel it is impossible, they will bypass it, defeating its purpose.

📈 Measuring Impact and Quality

Investing time in the Definition of Done and Acceptance Criteria yields returns you can measure. Teams that prioritize clarity tend to see improvements in velocity, predictability, and quality.

Key Metrics to Track

Defect Escape Rate: The share of defects that are found in production rather than before release. Clear criteria reduce the likelihood of logic errors escaping to users.

Rework Percentage: How much work is being undone or modified after initial completion. Ambiguous criteria often lead to rework.

Definition of Done Compliance: The share of stories marked “Done” that actually met the full DoD checklist.

Refinement Time: Time spent discussing criteria. While this takes time upfront, it reduces time spent clarifying during development.
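The first three metrics can be expressed as simple percentages. The formulas below are one common way to compute them and are assumptions rather than a standard. In particular, the escape rate is taken here as production defects divided by all defects found, and rework is measured in story points.

```python
def percentage(part: float, total: float) -> float:
    """Return part / total as a percentage, or 0.0 when there is nothing to measure."""
    return 0.0 if total == 0 else 100.0 * part / total


def defect_escape_rate(found_in_production: int, found_before_release: int) -> float:
    total = found_in_production + found_before_release
    return percentage(found_in_production, total)


def rework_percentage(reworked_points: float, completed_points: float) -> float:
    return percentage(reworked_points, completed_points)


def dod_compliance(stories_meeting_dod: int, stories_marked_done: int) -> float:
    return percentage(stories_meeting_dod, stories_marked_done)


# Made-up sprint numbers, purely for illustration.
print(defect_escape_rate(found_in_production=3, found_before_release=27))  # 10.0
print(rework_percentage(reworked_points=5, completed_points=40))           # 12.5
print(dod_compliance(stories_meeting_dod=18, stories_marked_done=20))      # 90.0
```

Whatever formulas a team picks, the trend across sprints is usually more informative than any single value.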

Feedback Loops

The quality of your criteria can be assessed through feedback loops. If a QA engineer frequently finds issues that should have been covered by the criteria, the criteria need refinement. If developers frequently ask clarifying questions during development, the criteria need more detail.

Use the Retrospective to discuss these issues. Ask the team:

Did we misunderstand any stories?

Were there edge cases we missed?

Was the DoD achievable within the sprint timebox?

🛠️ Practical Implementation Steps

Implementing a robust system for Acceptance Criteria and Definition of Done requires a structured approach. Follow these steps to integrate these practices into your workflow.

Step 1: Establish the Baseline

Start by defining the minimum Definition of Done. What is the absolute bare minimum required to consider code safe? This might include “Compiles,” “Runs locally,” and “Basic tests pass.” Get the team to agree on this baseline immediately.

Step 2: Train on Writing Criteria

Conduct workshops to teach the team how to write Given-When-Then scenarios. Use real stories from the backlog as practice material. This ensures everyone understands the expected format and depth.

Step 3: Integrate into the Workflow

Make the criteria a mandatory field in your tracking system. Stories without criteria cannot be moved to “Ready for Sprint Planning.” This enforces the discipline without requiring micromanagement.
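Most tracking tools can enforce this with a required field or a workflow rule. The underlying logic looks roughly like the sketch below, in which `BacklogItem`, `MissingCriteriaError`, and `mark_ready` are hypothetical names that do not correspond to any particular tool's API.

```python
from dataclasses import dataclass, field


class MissingCriteriaError(ValueError):
    """Raised when a story without acceptance criteria is promoted."""


@dataclass
class BacklogItem:
    title: str
    acceptance_criteria: list = field(default_factory=list)
    status: str = "Backlog"


def mark_ready(item: BacklogItem) -> BacklogItem:
    # Blank entries do not count as criteria.
    criteria = [c for c in item.acceptance_criteria if c.strip()]
    if not criteria:
        raise MissingCriteriaError(f"'{item.title}' has no acceptance criteria")
    item.status = "Ready for Sprint Planning"
    return item


ready = mark_ready(BacklogItem("Reset password",
                               ["Reset email arrives within 5 minutes"]))
print(ready.status)
```

A gate like this only checks that criteria exist. Whether they are clear enough is still a judgment made during refinement and planning.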

Step 4: Review During Planning

Dedicate time in Sprint Planning to review the criteria for selected stories. If a story is unclear, do not commit to it. Push it back to refinement. This protects the team from over-committing to ambiguous work.

Step 5: Continuous Improvement

Review the criteria after the sprint. Did they hold up? Did they catch the issues they were meant to catch? Update the templates and standards based on these findings.

🌟 Moving Forward

Clear acceptance criteria and a solid Definition of Done are not shortcuts; they are the bedrock of reliable Agile delivery. They transform development from a guessing game into a predictable process. By investing time upfront to define what success looks like, teams reduce waste, improve morale, and deliver higher quality software.

The journey to clarity is continuous. It requires discipline to stick to the standards and courage to push back on vague requirements. As you refine your processes, you will find that the time spent defining Done is time saved in debugging, rework, and stakeholder management. Focus on precision, foster collaboration, and let the quality of your criteria drive the quality of your product.