In the landscape of software delivery, the user story is the fundamental unit of value. It represents a promise of functionality between the business and the development team. However, a promise without clear terms is merely a hope. When acceptance criteria (AC) are vague, the entire development lifecycle suffers. Ambiguity introduces technical debt before a single line of code is written, leading to rework, missed deadlines, and frustrated stakeholders.

This guide provides a deep dive into identifying, diagnosing, and resolving blurry acceptance criteria. We will move beyond surface-level advice to establish a robust framework for quality assurance and a Definition of Ready. By the end of this article, you will be equipped to refine stories to a standard where testing is built in, not an afterthought.

📉 The Hidden Cost of Ambiguity

Why does this matter? Many teams operate under the assumption that developers can read minds or that ambiguity will be resolved during the coding phase. This is a dangerous fallacy. Every hour spent clarifying requirements during development is an hour subtracted from building, testing, and deploying. Fixing a defect found in production typically costs far more than correcting a misunderstood requirement in the planning phase.

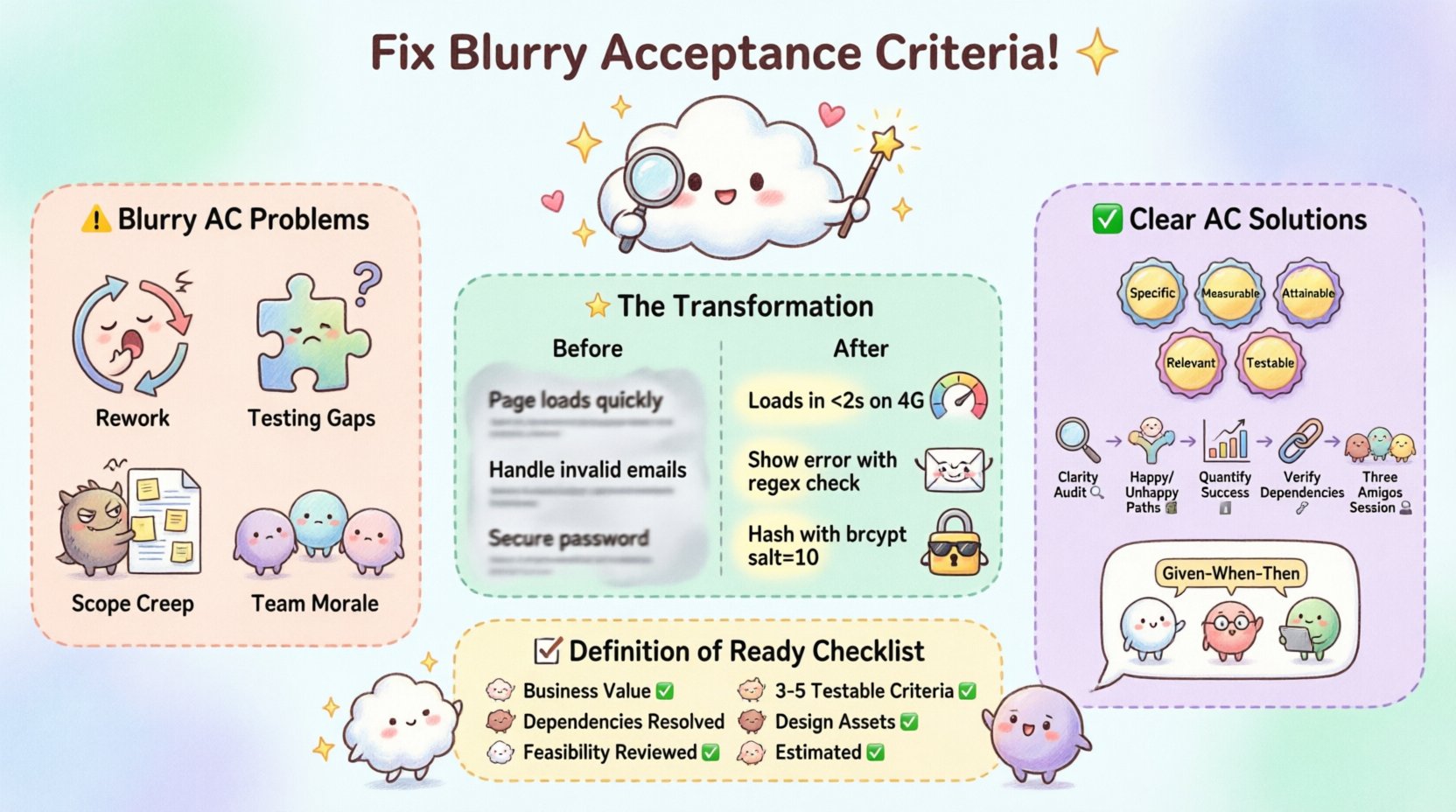

Ambiguity manifests in several destructive ways:

Rework: Developers build what they think is right, only to be told it is wrong later.

Testing Gaps: QA engineers cannot create comprehensive test cases without clear pass/fail conditions.

Scope Creep: Vague boundaries allow stakeholders to add features incrementally without formal change requests.

Team Morale: Constant back-and-forth communication creates friction and slows down the team’s velocity.

When acceptance criteria are blurry, the developer becomes a guesser. The tester becomes an investigator. The stakeholder becomes a critic. Clear acceptance criteria turn everyone into collaborators. They define the contract of work.

🔍 Identifying Symptoms of Blurry Acceptance Criteria

Before you can fix the problem, you must be able to spot it. Vague criteria often hide behind well-intentioned language that sounds professional but lacks precision. Look for these red flags in your backlog.

1. Subjective Adjectives

Words like fast, easy, user-friendly, or intuitive are subjective. One person’s fast is another’s slow. One person’s intuitive is another’s confusing. These terms cannot be tested objectively.

2. Missing Edge Cases

Does the story cover what happens when the internet cuts out? What if the database is down? What if the user enters a negative number? A story that only describes the happy path is incomplete. Real-world software must handle the unhappy paths gracefully.

3. Lack of Quantifiable Metrics

Without numbers, success is a matter of opinion. If a story says “improve performance,” how do you know when it is done? Is it 100ms? 500ms? 1 second? You need specific thresholds.

4. Hidden Dependencies

Sometimes the criteria rely on external systems that are not mentioned. “The report generates” implies data exists. But where does the data come from? If that dependency is unclear, the story cannot be implemented.

5. Over-Technical Language

Conversely, acceptance criteria that are too technical alienate stakeholders. Criteria should describe behavior, not implementation details. “The system uses a Redis cache” is an implementation detail. “The system responds in under 200ms for repeated requests” is a behavior.
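To make the distinction concrete, here is a minimal Python sketch of a check written against the behavior ("responds in under 200ms for repeated requests") rather than the mechanism. The function `fetch_report`, its simulated 50ms delay, and the use of `lru_cache` are all hypothetical stand-ins, not part of any real system described here.

```python
import time
from functools import lru_cache


@lru_cache(maxsize=128)
def fetch_report(report_id: int) -> str:
    """Hypothetical slow lookup. The cache is an implementation detail."""
    time.sleep(0.05)  # simulate a slow backend call
    return f"report-{report_id}"


def repeated_request_seconds(report_id: int) -> float:
    """Time the second, repeated request for the same report."""
    fetch_report(report_id)  # first request, not measured
    start = time.perf_counter()
    fetch_report(report_id)  # repeated request, the one the criterion covers
    return time.perf_counter() - start


def meets_repeated_request_criterion(report_id: int, limit_seconds: float = 0.2) -> bool:
    # The check knows only the threshold, not how the speed is achieved.
    return repeated_request_seconds(report_id) < limit_seconds
```

If the team later swapped the in-process cache for something else, this check would stay the same, which is the point of writing the criterion as behavior.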

🧩 The Anatomy of Clear Acceptance Criteria

To troubleshoot effectively, you must understand the target state. Clear acceptance criteria share specific characteristics that make them testable, achievable, and valuable. They act as the contract between the business and the technical team.

Consider the following principles when crafting criteria:

Specific: Avoid generalizations. State exactly what is required.

Measurable: Use numbers, dates, or binary states (yes/no).

Attainable: Ensure the criteria can be met within the sprint capacity.

Relevant: Does this directly support the user goal?

Testable: Can a QA engineer verify this without asking for clarification?

When these elements align, the story becomes a reliable delivery mechanism. It removes the guesswork and replaces it with verification.

🛠 How to Fix Your User Stories Before Coding Starts

Now that we understand the problem and the solution, let’s apply the fix. This section outlines a step-by-step process to audit and refine your user stories. This process should happen during backlog refinement or grooming sessions, well before the sprint begins.

Step 1: The Clarity Audit

Review every story in your upcoming sprint. Read the acceptance criteria out loud as if you are reading a legal contract. If a phrase makes you pause or ask a question, it is a candidate for revision. Highlight every adjective and every vague verb. Challenge every assumption.
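Part of this audit can be roughed out in code. The sketch below flags subjective wording in a criterion. The word list is an assumption seeded from the examples in this guide, and a match only means "look closer", not "this criterion is wrong".

```python
import re

# Assumed starter list, seeded with the subjective words this guide calls out.
# Extend it with the vague terms your own backlog tends to attract.
VAGUE_TERMS = ("fast", "quickly", "easy", "user-friendly", "intuitive", "good")


def flag_vague_terms(criterion: str) -> list[str]:
    """Return the vague terms found in one acceptance criterion, in list order."""
    found = []
    for term in VAGUE_TERMS:
        # \b keeps "fast" from matching inside "breakfast".
        if re.search(rf"\b{re.escape(term)}\b", criterion, flags=re.IGNORECASE):
            found.append(term)
    return found
```

Running `flag_vague_terms("Page loads quickly.")` returns `["quickly"]`, while a criterion such as "Page loads in under 2 seconds on a standard 4G connection." returns an empty list. A human still has to judge whether the remaining wording is testable.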

Step 2: Define the Happy and Unhappy Paths

For each story, explicitly list the happy path (what happens when everything goes right) and the unhappy paths (errors, timeouts, invalid inputs). Do not assume the happy path is the only one that matters. The unhappy path often reveals the most complex logic.
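One way to keep both kinds of path visible is to write them as rows in a table of cases. The following Python sketch assumes a hypothetical `parse_quantity` input handler with an invented rule (whole numbers from 1 to 99); the rule and the error messages are illustrative only.

```python
def parse_quantity(raw: str) -> int:
    """Hypothetical input handler: accept a whole number of items from 1 to 99."""
    try:
        value = int(raw.strip())
    except ValueError:
        raise ValueError("Quantity must be a whole number") from None
    if value < 1:
        raise ValueError("Quantity must be at least 1")
    if value > 99:
        raise ValueError("Quantity must be at most 99")
    return value


# One row per path: (input, expected result) or (input, expected error message).
HAPPY_PATHS = [("1", 1), (" 42 ", 42), ("99", 99)]
UNHAPPY_PATHS = [
    ("-5", "Quantity must be at least 1"),
    ("0", "Quantity must be at least 1"),
    ("100", "Quantity must be at most 99"),
    ("abc", "Quantity must be a whole number"),
    ("", "Quantity must be a whole number"),
]


def check_paths() -> None:
    """Verify every listed path; raises AssertionError on the first mismatch."""
    for raw, expected in HAPPY_PATHS:
        assert parse_quantity(raw) == expected
    for raw, message in UNHAPPY_PATHS:
        try:
            parse_quantity(raw)
        except ValueError as err:
            assert str(err) == message
        else:
            raise AssertionError(f"{raw!r} should have been rejected")
```

Note that the unhappy list is longer than the happy one, which matches the observation that error handling tends to carry most of the logic.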

Step 3: Quantify Success

Replace every subjective term with a metric. Change “fast loading” to “load within 2 seconds on 4G.” Change “accurate data” to “data must match the source database within 0.01% variance.” If a metric cannot be defined, the story is likely not ready.
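A metric is only useful once everyone agrees how it is computed. As a sketch, the "0.01% variance" example could be read as a relative difference against the source value. That reading, the function name, and the handling of a zero source value are assumptions to confirm with the stakeholder.

```python
def within_variance(source: float, actual: float, tolerance: float = 0.0001) -> bool:
    """True when `actual` deviates from `source` by at most `tolerance` (0.0001 = 0.01%)."""
    if source == 0:
        # Relative variance is undefined against zero; assume an exact match is required.
        return actual == 0
    return abs(actual - source) / abs(source) <= tolerance
```

For a source value of 10,000, a reading of 10,000.5 differs by 0.005% and passes, while 10,002 differs by 0.02% and fails. Writing the formula down this way often surfaces questions (relative or absolute? per field or per record?) that the sentence alone hides.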

Step 4: Verify Dependencies

Identify every external system, API, or data source the story interacts with. Confirm that these dependencies are available and documented. If a story depends on a feature that does not exist yet, split the story or move it to a later sprint.

Step 5: The Three Amigos Session

Bring together the Business Owner (Product), the Developer, and the Tester. This collaboration is crucial. The Business Owner ensures the criteria match the user need. The Developer ensures the criteria are technically feasible. The Tester ensures the criteria are testable. This trio can identify gaps in minutes that a single person might miss for days.

📊 Comparison: Vague vs. Specific Criteria

Visualizing the difference helps reinforce the concept. Below is a table comparing typical vague criteria against their refined, actionable counterparts.

| Category | ❌ Blurry / Vague | ✅ Clear / Actionable |
|---|---|---|
| Performance | Page loads quickly. | Page loads in under 2 seconds on a standard 4G connection. |
| Input Validation | Handle invalid emails. | Display the error message “Please enter a valid email” if the format does not match the regex `^[^\s@]+@[^\s@]+\.[^\s@]+$`. |
| Security | Secure the password. | Password must be hashed using bcrypt with a cost factor of 10 before storage. |
| UI Behavior | Button looks good. | Button is 48×48 pixels, uses brand primary color #0055FF, and changes opacity to 50% on hover. |
| Data | Save user data. | System saves user profile to database within 500ms and returns status code 201 Created. |
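A criterion written at this level of detail translates almost directly into a check. The Python sketch below takes the pattern and error message from the Input Validation row; the function name and the choice to return `None` for valid input are assumptions for illustration.

```python
import re
from typing import Optional

# Pattern and message copied from the Input Validation row above.
EMAIL_PATTERN = re.compile(r"^[^\s@]+@[^\s@]+\.[^\s@]+$")
ERROR_MESSAGE = "Please enter a valid email"


def validate_email(value: str) -> Optional[str]:
    """Return None when the value matches the pattern, else the error message."""
    # fullmatch requires the whole string to match, including any trailing newline.
    if EMAIL_PATTERN.fullmatch(value):
        return None
    return ERROR_MESSAGE
```

The pattern is deliberately loose: it checks shape, not whether the address exists. Whether that is strict enough is itself a question for the acceptance criteria.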

🤝 Collaboration and Communication

Even with the best guidelines, human communication remains the weakest link. Collaboration is not a one-time meeting; it is a continuous process. Here are specific techniques to maintain clarity throughout the lifecycle.

1. Use Examples (Gherkin Syntax)

While not mandatory, using behavior-driven development (BDD) syntax like Given-When-Then forces specificity. It structures the criteria into a logical flow.

Given the user is on the login page

When the user enters a valid username and password

Then the system redirects to the dashboard

This format leaves little room for interpretation regarding the sequence of events.
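The same three steps map onto the arrange, act, and assert phases of an automated test. Here is a minimal Python sketch, assuming a hypothetical in-memory `submit_login` function and an invented user record in place of a real application.

```python
# Hypothetical in-memory stand-in for the application under test.
USERS = {"alice": "correct-horse"}


def submit_login(current_page: str, username: str, password: str) -> str:
    """Return the page the user lands on after submitting the login form."""
    if current_page != "login":
        raise ValueError("login form is only available on the login page")
    if USERS.get(username) == password:
        return "dashboard"
    return "login"


def test_valid_login_redirects_to_dashboard() -> None:
    # Given the user is on the login page
    current_page = "login"
    # When the user enters a valid username and password
    landed_on = submit_login(current_page, "alice", "correct-horse")
    # Then the system redirects to the dashboard
    assert landed_on == "dashboard"
```

Keeping the Given-When-Then wording as comments lets a stakeholder read the test and recognize the criterion they agreed to.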

2. Visual Aids

Text alone is often insufficient. Wireframes, mockups, or flowcharts can clarify UI interactions and data flows. A picture of the error state is often worth a thousand words of explanation. Attach these artifacts directly to the user story.

3. Acceptance Testing First

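Encourage the team to write the acceptance tests before coding begins. If you cannot write a test case for a criterion, the criterion is not yet well defined. This practice, often called Acceptance Test-Driven Development (ATDD), applies the test-first idea behind Test-Driven Development (TDD) at the story level and ensures the criteria are verifiable.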

4. Regular Refinement Cycles

Do not wait until the sprint starts to refine stories. Dedicate time every week to review the backlog. Stories should be “ready” before they enter a sprint. If a story enters a sprint with blurry criteria, it is a sign of process failure, not just a bad story.

📝 The Definition of Ready (DoR)

To institutionalize this quality, implement a Definition of Ready. This is a checklist that a story must pass before it is considered eligible for a sprint. It acts as a gatekeeper to prevent blurry stories from entering the development pipeline.

Your DoR checklist might include:

Business Value: Is the value to the user clearly articulated?

Acceptance Criteria: Are there enough specific, testable criteria to cover the story (typically three to five)?

Dependencies: Are all external dependencies identified and resolved?

Design Assets: Are UI mockups or wireframes attached?

Technical Feasibility: Has the team reviewed the story for technical constraints?

Estimates: Is the story estimated by the development team?

If a story does not meet these criteria, it stays in the backlog. It does not matter how urgent the stakeholder thinks it is. A story that cannot be defined cannot be delivered. This protects the team from burnout and ensures consistent quality.
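A checklist like this can also be expressed as a simple gate. The Python sketch below assumes a hypothetical `Story` record whose fields mirror the checklist items, and a minimum of three acceptance criteria. Both are illustrative choices, not a prescribed schema.

```python
from dataclasses import dataclass, field
from typing import Optional

MIN_CRITERIA = 3  # assumed threshold; adjust to your team's Definition of Ready


@dataclass
class Story:
    """Hypothetical story record; field names mirror the checklist above."""
    title: str
    business_value: str = ""
    acceptance_criteria: list[str] = field(default_factory=list)
    unresolved_dependencies: list[str] = field(default_factory=list)
    design_assets: list[str] = field(default_factory=list)
    feasibility_reviewed: bool = False
    estimate: Optional[int] = None


def readiness_gaps(story: Story) -> list[str]:
    """Return the checklist items the story still fails; empty means ready."""
    gaps = []
    if not story.business_value.strip():
        gaps.append("Business Value")
    if len(story.acceptance_criteria) < MIN_CRITERIA:
        gaps.append("Acceptance Criteria")
    if story.unresolved_dependencies:
        gaps.append("Dependencies")
    if not story.design_assets:
        gaps.append("Design Assets")
    if not story.feasibility_reviewed:
        gaps.append("Technical Feasibility")
    if story.estimate is None:
        gaps.append("Estimates")
    return gaps
```

A gate like this only counts criteria; it cannot judge whether they are specific or testable. That judgment still belongs to the refinement conversation.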

🚫 Common Pitfalls to Avoid

Avoiding mistakes is as important as knowing best practices. Here are common traps teams fall into when trying to fix acceptance criteria.

1. Over-Engineering

Do not write acceptance criteria for features that might never be built. Keep the criteria focused on the MVP (Minimum Viable Product). If you detail every edge case for a future feature, you waste time that could be spent on current delivery.

2. Copy-Pasting Criteria

Do not reuse acceptance criteria from previous stories without checking context. A “login” story for a mobile app differs from a desktop app. A “search” story for an internal tool differs from a public e-commerce site. Context changes requirements.

3. Ignoring Non-Functional Requirements

Functional requirements (what the system does) are only half the battle. Non-functional requirements (how the system performs) are often where the ambiguity lies. Always include performance, security, and accessibility criteria.

4. Writing Implementation Details

Remember the boundary between behavior and implementation. “Click the submit button” is behavior. “Submit the form via POST request to /api/submit” is implementation. Focus on the behavior. The implementation can change without changing the acceptance criteria.

🔮 Long-Term Impact on Quality

Investing time in fixing acceptance criteria yields compounding returns. Over time, the team builds a library of clear, reusable criteria patterns. New team members can onboard faster because the stories are self-documenting. The velocity of the team increases because there is less rework.

Furthermore, the relationship between business and technical teams improves. Stakeholders trust the team to deliver exactly what was agreed upon. Developers feel confident because they have clear instructions. QA engineers can work efficiently because they have a clear plan.

This stability allows the team to focus on innovation rather than clarification. It shifts the culture from reactive problem-solving to proactive quality assurance. The cost of quality drops because defects are prevented, not detected.

🛡 Final Thoughts on Precision

Software development is an exercise in precision. Every character typed, every condition evaluated, and every interaction designed carries weight. Ambiguity is the enemy of precision. By rigorously applying these troubleshooting steps to your acceptance criteria, you secure the foundation of your delivery.

Remember, a user story without clear acceptance criteria is not a story; it is a request for a guess. Do not ask your team to guess. Demand clarity. Build the contract. Deliver the value. The difference between a successful project and a failed one often lies in the lines of text that define success.

Start today. Review your backlog. Find the blurriest story. Apply the steps outlined in this guide. Transform it into a clear, actionable, and testable unit of work. That is how you build software that works.