In the landscape of Agile development, the user story serves as the fundamental unit of value delivery. It is a promise of functionality, but a promise alone is rarely enough to build trust. The bridge between a vague idea and a shipped feature is a set of acceptance criteria. These criteria act as the contract between stakeholders, product owners, and the development team. They define the conditions under which a story is considered complete.

Yet, despite their critical importance, teams frequently struggle with writing effective acceptance criteria. Poorly defined criteria lead to rework, missed deadlines, and frustrated stakeholders. This guide explores the most common pitfalls found in user story acceptance criteria and provides actionable strategies to correct them swiftly. By addressing these issues, teams can improve velocity and quality without adding unnecessary overhead.

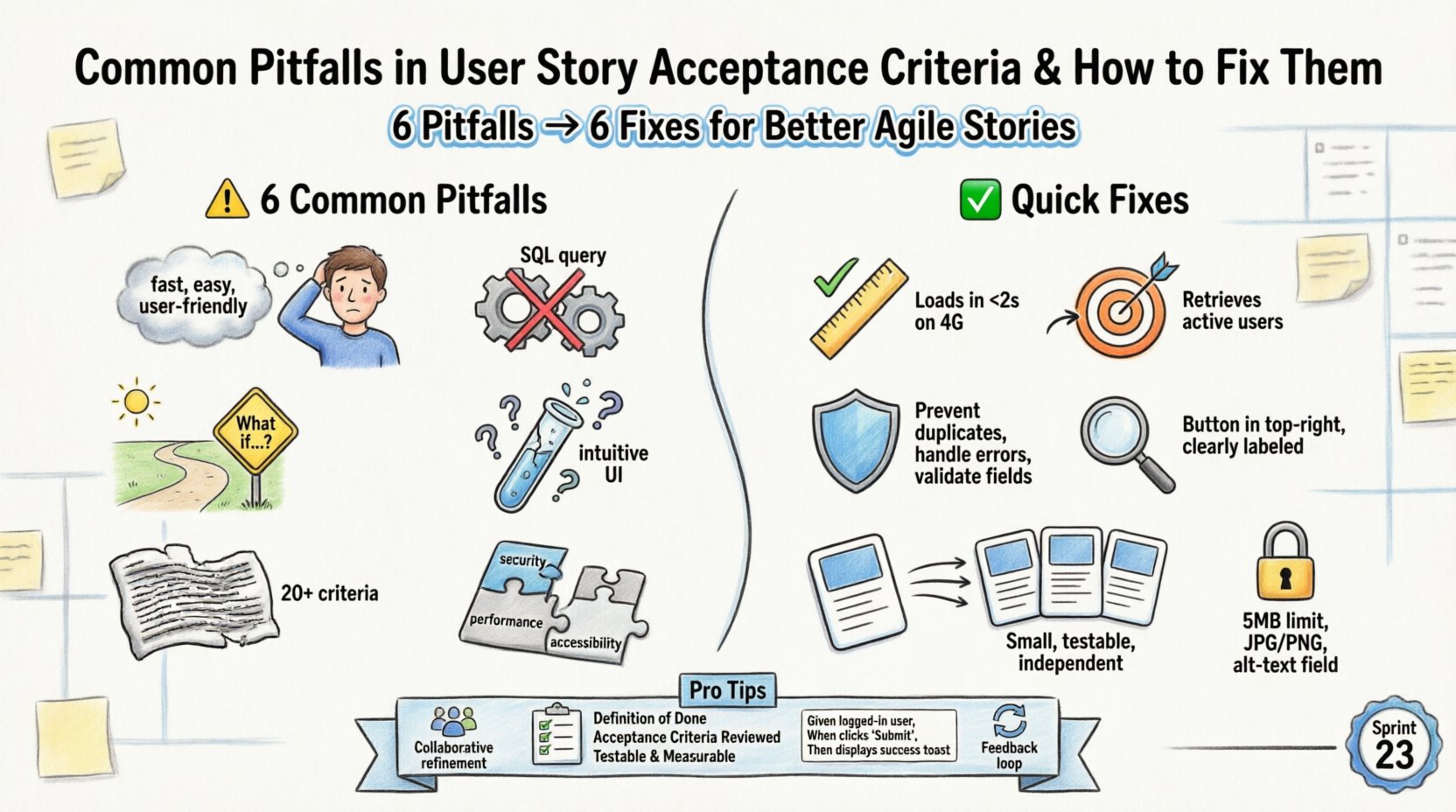

1. Ambiguity and Vague Language 🗣️

The most pervasive issue in acceptance criteria is ambiguity. When terms are subjective, developers and testers interpret them differently. This leads to the classic scenario where a developer marks a story as done, only for the tester to find that it does not meet expectations. Words like “fast,” “easy,” “secure,” or “user-friendly” are red flags.
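One lightweight way to catch these red flags during refinement is a simple word check. The Python sketch below is a hypothetical helper, not a standard tool; the word list is an assumption drawn from the examples in this guide and would need tuning for a real team.

```python
import re

# Hypothetical list of subjective terms; extend it with your team's own red flags.
VAGUE_TERMS = ["fast", "quickly", "easy", "secure", "user-friendly", "intuitive"]


def find_vague_terms(criterion: str) -> list[str]:
    """Return the subjective terms that appear in an acceptance criterion."""
    found = []
    for term in VAGUE_TERMS:
        # \b keeps "fast" from matching inside words such as "breakfast".
        if re.search(rf"\b{re.escape(term)}\b", criterion, flags=re.IGNORECASE):
            found.append(term)
    return found


if __name__ == "__main__":
    for text in [
        "The system should load quickly.",
        "The dashboard loads within 2 seconds on a 4G connection.",
    ]:
        print(f"{text!r}: {find_vague_terms(text) or 'no vague terms'}")
```

A check like this only flags candidates for discussion; a human still decides whether a flagged word needs a metric.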

- The Problem: A criterion states: “The system should load quickly.”

- The Impact: Does quick mean 1 second? 5 seconds? 10 seconds? Without a metric, the story cannot be verified objectively.

- The Fix: Replace subjective adjectives with quantifiable metrics.

Consider this refined version: “The dashboard loads within 2 seconds on a 4G connection.” This removes the guesswork and gives the testing phase a clear pass or fail condition. Clear criteria also mean fewer clarification questions during development and sprint reviews, saving time for everyone involved.
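To show how the refined criterion turns into a pass or fail check, here is a minimal Python sketch. `load_dashboard` is a hypothetical stand-in for whatever drives the real page load, and the sketch does not simulate a 4G connection; a real check would need the test environment to throttle the network accordingly.

```python
import time
from typing import Callable

# Budget taken from the refined criterion: "loads within 2 seconds".
LOAD_BUDGET_SECONDS = 2.0


def within_budget(action: Callable[[], object], budget_seconds: float) -> bool:
    """Run `action` once and report whether it finished inside the time budget."""
    start = time.perf_counter()
    action()
    elapsed = time.perf_counter() - start
    return elapsed <= budget_seconds


def load_dashboard() -> str:
    """Hypothetical stand-in for the real page load; replace with your own client call."""
    time.sleep(0.1)  # simulate some work
    return "dashboard"


if __name__ == "__main__":
    print("Pass" if within_budget(load_dashboard, LOAD_BUDGET_SECONDS) else "Fail")
```

A single timing sample is noisy, so in practice a team would likely repeat the measurement several times and compare a percentile against the budget.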

2. Focusing on Implementation Instead of Behavior 🔧

Acceptance criteria should describe what the system does, not how it does it. When criteria include technical implementation details, they limit the flexibility of the development team. This approach creates a dependency on specific technologies or database structures that might change later.

- The Problem: A criterion states: “The application must use a SQL query to fetch the user list from the database.”

- The Impact: If the team later moves to a NoSQL database or fetches users through an API, the criterion becomes invalid even though the behavior users see is unchanged. It also restricts technical decision-making.

- The Fix: Focus on the outcome. The criterion should be: “The application retrieves a list of active users based on the search filters provided.”

This shift allows developers to choose the most efficient method to achieve the result. It also keeps the criteria stable even if the underlying architecture evolves. The goal is to define the user experience, not the code structure.
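The sketch below illustrates the idea in Python: the acceptance check calls only the behavior (“retrieve active users matching a filter”) and passes unchanged against two interchangeable storage backends. `UserSource`, `ListSource`, `DictSource`, and the sample data are all hypothetical; in a real system the backends might be SQL, NoSQL, or a remote API.

```python
from dataclasses import dataclass
from typing import Iterable, Protocol


@dataclass(frozen=True)
class User:
    name: str
    active: bool


class UserSource(Protocol):
    """Any storage backend can sit behind this interface."""

    def all_users(self) -> Iterable[User]: ...


class ListSource:
    """Hypothetical in-memory backend."""

    def __init__(self, users: list[User]) -> None:
        self._users = users

    def all_users(self) -> Iterable[User]:
        return list(self._users)


class DictSource:
    """Hypothetical key-value backend, standing in for a different database."""

    def __init__(self, users: list[User]) -> None:
        self._by_name = {u.name: u for u in users}

    def all_users(self) -> Iterable[User]:
        return self._by_name.values()


def find_active_users(source: UserSource, name_filter: str) -> list[str]:
    """Behavior under test: active users whose name contains the filter text."""
    return sorted(
        u.name
        for u in source.all_users()
        if u.active and name_filter.lower() in u.name.lower()
    )


def check_criterion(source: UserSource) -> bool:
    """The acceptance check looks only at the outcome, never at how data is stored."""
    return find_active_users(source, "an") == ["Ana", "Dana"]


SAMPLE = [User("Ana", True), User("Dana", True), User("Jan", False), User("Bo", True)]

if __name__ == "__main__":
    for backend in (ListSource(SAMPLE), DictSource(SAMPLE)):
        print(type(backend).__name__, check_criterion(backend))
```

Swapping the backend changes nothing in `check_criterion`, which is the property a behavior-focused criterion is meant to preserve.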

3. The Happy Path Only 🌞

Many teams write acceptance criteria that only cover the ideal scenario. This is known as the “happy path.” It assumes the user enters perfect data, the network is stable, and no errors occur. While this covers the primary flow, it ignores the reality of software usage.

- The Problem: A criterion states: “When the user clicks submit, the order is saved.”

- The Impact: What happens if the user clicks submit twice? What if the internet disconnects mid-transmission? What if a field is left empty? These scenarios often lead to bugs in production.

- The Fix: Explicitly include edge cases and error conditions.

A robust criterion set would include:

- If the submit button is clicked twice, the system prevents duplicate entries.

- If the network fails, a persistent error message is displayed with a retry option.

- If a required field is missing, the specific field is highlighted with a clear error message.

Covering these scenarios early prevents critical failures later and makes the software more resilient.
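As a rough illustration, the Python sketch below encodes the duplicate-click and missing-field criteria alongside the happy path. `OrderService`, `submission_id` (assumed to be a token the client generates once per form so a second click can be recognized), and the required field names are hypothetical. The network-failure criterion lives in the client and transport layer and is not modeled here.

```python
from dataclasses import dataclass, field
from typing import Any

# Assumed form fields for the order; adjust to your own form.
REQUIRED_FIELDS = ("customer", "item", "quantity")


@dataclass
class SubmitResult:
    saved: bool
    duplicate: bool = False
    errors: dict[str, str] = field(default_factory=dict)


def _is_blank(value: Any) -> bool:
    """Treat a missing value or whitespace-only text as empty."""
    return value is None or str(value).strip() == ""


class OrderService:
    """Hypothetical order handler covering the happy path plus two edge cases."""

    def __init__(self) -> None:
        self.orders: dict[str, dict[str, Any]] = {}

    def submit(self, submission_id: str, form: dict[str, Any]) -> SubmitResult:
        # Edge case: a required field is missing, so report each one by name.
        errors = {
            name: "This field is required."
            for name in REQUIRED_FIELDS
            if _is_blank(form.get(name))
        }
        if errors:
            return SubmitResult(saved=False, errors=errors)

        # Edge case: a double click sends the same submission_id twice; keep one order.
        if submission_id in self.orders:
            return SubmitResult(saved=False, duplicate=True)

        # Happy path: save the order exactly once.
        self.orders[submission_id] = dict(form)
        return SubmitResult(saved=True)


if __name__ == "__main__":
    service = OrderService()
    order = {"customer": "Ana", "item": "Book", "quantity": 2}
    first = service.submit("order-1", order)
    second = service.submit("order-1", order)  # simulated second click
    print(first.saved, second.duplicate, len(service.orders))  # True True 1
    print(service.submit("order-2", {"customer": "Ana", "item": ""}).errors)
```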

4. Lack of Testability 🧪

If you cannot write a test for it, you cannot verify it. Acceptance criteria must be testable. This does not mean the tests must be automated right away, but the condition must be observable and verifiable by a human tester or a script.

- The Problem: A criterion states: “The user interface should be intuitive.”

- The Impact: How do you measure intuition? You cannot automate this. It relies on personal opinion, leading to subjective reviews.

- The Fix: Define observable behaviors.

Instead of “intuitive,” use: “The primary action button is located in the top-right corner and is labeled with the action it performs (for example, ‘Save’).” A tester can visually inspect this and confirm it. Testability is the cornerstone of quality assurance: it ensures that the Definition of Done is met consistently across different stories.
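An observable criterion like this can also be checked by a script. The Python sketch below assumes hypothetical layout data (`Element`, with pixel coordinates such as a UI test driver might report) and reads “top-right corner” as “entirely inside the top-right quadrant of the viewport”; both are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Element:
    """Hypothetical layout data for one on-screen element."""
    label: str
    x: float       # left edge, in pixels from the left of the viewport
    y: float       # top edge, in pixels from the top of the viewport
    width: float
    height: float


def is_in_top_right(el: Element, viewport_width: float, viewport_height: float) -> bool:
    """True if the element lies entirely inside the top-right quadrant."""
    return (
        el.x >= viewport_width / 2
        and el.x + el.width <= viewport_width
        and el.y >= 0
        and el.y + el.height <= viewport_height / 2
    )


def primary_button_ok(
    el: Element, viewport_width: float, viewport_height: float, expected_label: str
) -> bool:
    """The criterion as a check: correct position and the expected action label."""
    return is_in_top_right(el, viewport_width, viewport_height) and el.label.strip() == expected_label


if __name__ == "__main__":
    button = Element("Save", x=1100, y=20, width=120, height=40)
    print(primary_button_ok(button, 1280, 720, "Save"))
```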

5. Over-Complexity and Bloat 🤯

While clarity is key, too much detail can be just as harmful. A user story with twenty acceptance criteria is often a sign that the story is too large. It suggests the story should be split into smaller, more manageable chunks.

- The Problem: A story contains criteria for multiple distinct features, such as login, profile update, and password reset.

- The Impact: The story becomes hard to estimate, hard to test, and hard to deploy. If one part fails, the whole story is blocked. This violates the principle of independent stories.

- The Fix: Split the story into multiple user stories.

Each story should deliver a slice of value on its own. If you have ten criteria, ask if they can be grouped into two separate stories of five criteria each. This improves flow and reduces risk.

6. Ignoring Non-Functional Requirements ⚙️

Functional criteria describe what the system does. Non-functional requirements describe how the system performs. Teams often focus solely on functionality and neglect performance, security, and accessibility.

- The Problem: A criterion states: “Users can upload a profile picture.”

- The Impact: The feature works, but what if the image is 50MB? It could exhaust server storage or memory. What if the file is an executable? That is a security risk. What if a user relies on a screen reader? Without alt text, the image conveys nothing to them.

- The Fix: Include constraints in the criteria.

Refined criteria should specify:

- File size limit: Maximum 5MB.

- Supported formats: JPG, PNG, GIF.

- Accessibility: Image must have an alt text field available.

Neglecting these requirements often results in post-launch hotfixes. Integrating them into the acceptance criteria ensures quality is built in from the start.
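The refined upload constraints translate naturally into a validation function. This Python sketch is illustrative: it reads “5MB” as 5 MiB, checks the file’s leading “magic” bytes in addition to its extension so that a renamed executable is rejected, and goes one step further than the criterion by requiring non-empty alt text. Drop that last check if your team only requires the field to exist. `validate_profile_picture` is a hypothetical name.

```python
import os

MAX_BYTES = 5 * 1024 * 1024  # "Maximum 5MB", read here as 5 MiB (an assumption)

# Leading bytes of each supported format; checking content as well as the
# extension rejects, for example, an executable renamed to photo.png.
SIGNATURES = {
    ".jpg": (b"\xff\xd8\xff",),
    ".jpeg": (b"\xff\xd8\xff",),
    ".png": (b"\x89PNG\r\n\x1a\n",),
    ".gif": (b"GIF87a", b"GIF89a"),
}


def validate_profile_picture(filename: str, data: bytes, alt_text: str) -> list[str]:
    """Return the violated constraints; an empty list means the upload is accepted."""
    errors = []
    if len(data) > MAX_BYTES:
        errors.append("File exceeds the 5MB limit.")
    ext = os.path.splitext(filename)[1].lower()
    signatures = SIGNATURES.get(ext)
    if signatures is None:
        errors.append("Only JPG, PNG, and GIF files are supported.")
    elif not data.startswith(signatures):
        errors.append("File content does not match its extension.")
    if not alt_text.strip():
        errors.append("Alt text is required.")
    return errors
```

Returning a list of messages, rather than stopping at the first failure, lets the interface show every problem at once, in the spirit of the “highlight the specific field” criterion earlier.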

Comparison: Bad Criteria vs. Refined Criteria

Visualizing the difference helps teams understand the goal. The table below contrasts common mistakes with improved versions.

| Category | Bad Example | Refined Example |
|---|---|---|
| Ambiguity | “Page loads fast.” | “Page loads in under 2 seconds on 4G.” |
| Technical | “Use Redis cache.” | “Data is retrieved from cache if available.” |
| Happy Path | “Login succeeds.” | “Login succeeds with valid credentials; fails with invalid credentials.” |
| Testability | “System is secure.” | “Passwords are hashed using bcrypt before storage.” |
| NFRs | “File upload works.” | “File upload accepts PDFs under 10MB.” |

Strategies to Fix Criteria Quickly 🛠️

Identifying the problems is only half the battle. Implementing a fix requires a change in process and culture. Here are practical steps to improve acceptance criteria without slowing down the team.

1. Collaborative Refinement Sessions

Acceptance criteria should not be written in isolation by the product owner. They should be a collaborative effort involving developers, testers, and stakeholders. During refinement meetings, ask the “How” and “What” questions.

- Ask the Tester: “How would you break this? What are the edge cases?”

- Ask the Developer: “What are the technical constraints we need to consider?”

- Ask the Stakeholder: “Is this the most important behavior to prioritize?”

This three-way collaboration ensures that all perspectives are considered before the sprint begins. It reduces the likelihood of missing critical requirements later.

2. Establish a Definition of Done (DoD)

Acceptance criteria are specific to a story, but the Definition of Done is global. It applies to every story in the backlog. A robust DoD includes items like code review, unit testing, and documentation.

- Ensure the DoD is visible and accessible.

- Treat a story as done only when it satisfies both its acceptance criteria and the DoD.

- Review the DoD periodically to ensure it remains relevant.

When the DoD is clear, the team knows the baseline quality required. This prevents stories from being marked as done when they are technically incomplete.
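The relationship between the two can be sketched in a few lines of Python: story-specific criteria and the global DoD are separate checklists, and both must pass. The DoD items below are taken from the examples named in this section; the function name and data shapes are hypothetical.

```python
# Global Definition of Done, using the items named above; your team's list will differ.
DEFINITION_OF_DONE = ("code reviewed", "unit tests passing", "documentation updated")


def is_done(
    criteria_results: dict[str, bool], completed_dod_items: set[str]
) -> tuple[bool, list[str]]:
    """A story is done only when every criterion passes AND every DoD item is met."""
    gaps = [f"criterion failed: {name}" for name, passed in criteria_results.items() if not passed]
    gaps += [
        f"DoD item missing: {item}"
        for item in DEFINITION_OF_DONE
        if item not in completed_dod_items
    ]
    return (not gaps, gaps)


if __name__ == "__main__":
    done, gaps = is_done(
        {"logout redirects to login page": True},
        {"code reviewed", "unit tests passing"},
    )
    print(done, gaps)
```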

3. Use Standardized Formats

Consistency improves readability. Adopting a standard format like Given-When-Then (the structure used by Gherkin) can help structure the criteria logically. While full BDD (Behavior-Driven Development) is not always necessary, the structure encourages thinking in scenarios.

- Given: The initial context or state.

- When: The action taken by the user.

- Then: The expected outcome.

Example: “Given a logged-in user, When they click logout, Then they are redirected to the login page.” This structure makes it easier to translate criteria into automated tests later.
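Here is one way the logout scenario could map onto an automated test, written as a pytest-style Python function with each clause marked in a comment. `Session` and the `/login` path are hypothetical stand-ins for the real application.

```python
from typing import Optional


class Session:
    """Hypothetical stand-in for the application's authentication layer."""

    def __init__(self) -> None:
        self.user: Optional[str] = None

    def log_in(self, username: str) -> None:
        self.user = username

    def log_out(self) -> str:
        """Clear the session and return the path the browser is redirected to."""
        self.user = None
        return "/login"


def test_logout_redirects_to_login_page() -> None:
    # Given a logged-in user
    session = Session()
    session.log_in("ana")

    # When they click logout
    redirect_path = session.log_out()

    # Then they are redirected to the login page (and are no longer logged in)
    assert redirect_path == "/login"
    assert session.user is None


if __name__ == "__main__":
    test_logout_redirects_to_login_page()
    print("Scenario passed")
```

Teams that want the Gherkin text itself to drive the tests can look at tools such as behave or pytest-bdd, which bind Given/When/Then steps to Python functions.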

4. Regular Review and Feedback Loops

Acceptance criteria are not set in stone. They should evolve based on feedback. After a sprint review, look at the stories that caused confusion or rework.

- Identify which criteria were vague.

- Update the backlog items to reflect the lessons learned.

- Share these lessons with the wider team to prevent repetition.

Continuous improvement is key. By treating acceptance criteria as living documents, teams can adapt to changing requirements while maintaining clarity.

Building a Quality Culture 🏗️

Ultimately, writing good acceptance criteria is a cultural challenge, not just a process one. It requires a shift in mindset from “getting it done” to “getting it right.”

- Psychological Safety: Team members must feel safe to question vague criteria without fear of judgment. If a developer says, “I don’t understand this requirement,” it should be welcomed.

- Shared Ownership: Everyone owns the quality of the product. The product owner typically leads the writing of the criteria, but the whole team shapes them and is responsible for verifying them.

- Focus on Value: Remember that the goal is to deliver value to the user. Criteria that do not contribute to user value should be questioned or removed.

When quality is a shared responsibility, the need for policing decreases. The team naturally seeks clarity and precision in their work. This leads to higher morale and better products.

Measuring Success

How do you know if your acceptance criteria are improving? Look at the following metrics over time.

- Rework Rate: The percentage of stories returned due to incomplete criteria.

- Clarification Time: Time spent discussing requirements during development.

- Defect Leakage: The number of bugs found in production that should have been caught by the criteria.

Tracking these metrics helps identify trends. If rework decreases, your criteria are likely becoming more precise. If clarification time drops, the team is spending less energy guessing and more energy building.
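As a minimal sketch of how these indicators might be computed per sprint, the Python below assumes a hypothetical per-story export from your tracker (`StoryRecord`); the field names are illustrative, not those of any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StoryRecord:
    """Hypothetical per-story data exported from your tracker."""
    story_id: str
    reopened_for_criteria: bool   # returned because criteria were unclear or incomplete
    clarification_hours: float    # time spent discussing requirements mid-sprint
    escaped_defects: int          # production bugs the criteria should have caught


def sprint_metrics(stories: list[StoryRecord]) -> dict[str, float]:
    """Summarize rework rate, clarification time, and defect leakage for one sprint."""
    if not stories:
        raise ValueError("No stories to measure.")
    reopened = sum(s.reopened_for_criteria for s in stories)
    return {
        "rework_rate_pct": 100.0 * reopened / len(stories),
        "clarification_hours": sum(s.clarification_hours for s in stories),
        "defect_leakage": float(sum(s.escaped_defects for s in stories)),
    }


if __name__ == "__main__":
    sprint = [
        StoryRecord("S-1", False, 1.5, 0),
        StoryRecord("S-2", True, 0.0, 0),
        StoryRecord("S-3", False, 2.0, 1),
        StoryRecord("S-4", False, 0.5, 0),
    ]
    print(sprint_metrics(sprint))
```

With one of four stories reopened, the rework rate here is 25%. The trend across sprints matters more than any single value.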

Final Thoughts on Criteria Quality

Improving user story acceptance criteria is an ongoing journey. It requires discipline, collaboration, and a willingness to challenge the status quo. By avoiding ambiguity, focusing on behavior, and including edge cases, teams can build software that meets expectations consistently.

The effort invested in writing clear criteria pays dividends in reduced rework, faster delivery, and happier customers. It transforms the acceptance criteria from a bureaucratic hurdle into a powerful tool for quality assurance. Start with one story. Refine the criteria. Measure the outcome. Repeat. Over time, these small changes compound into significant improvements in team performance.